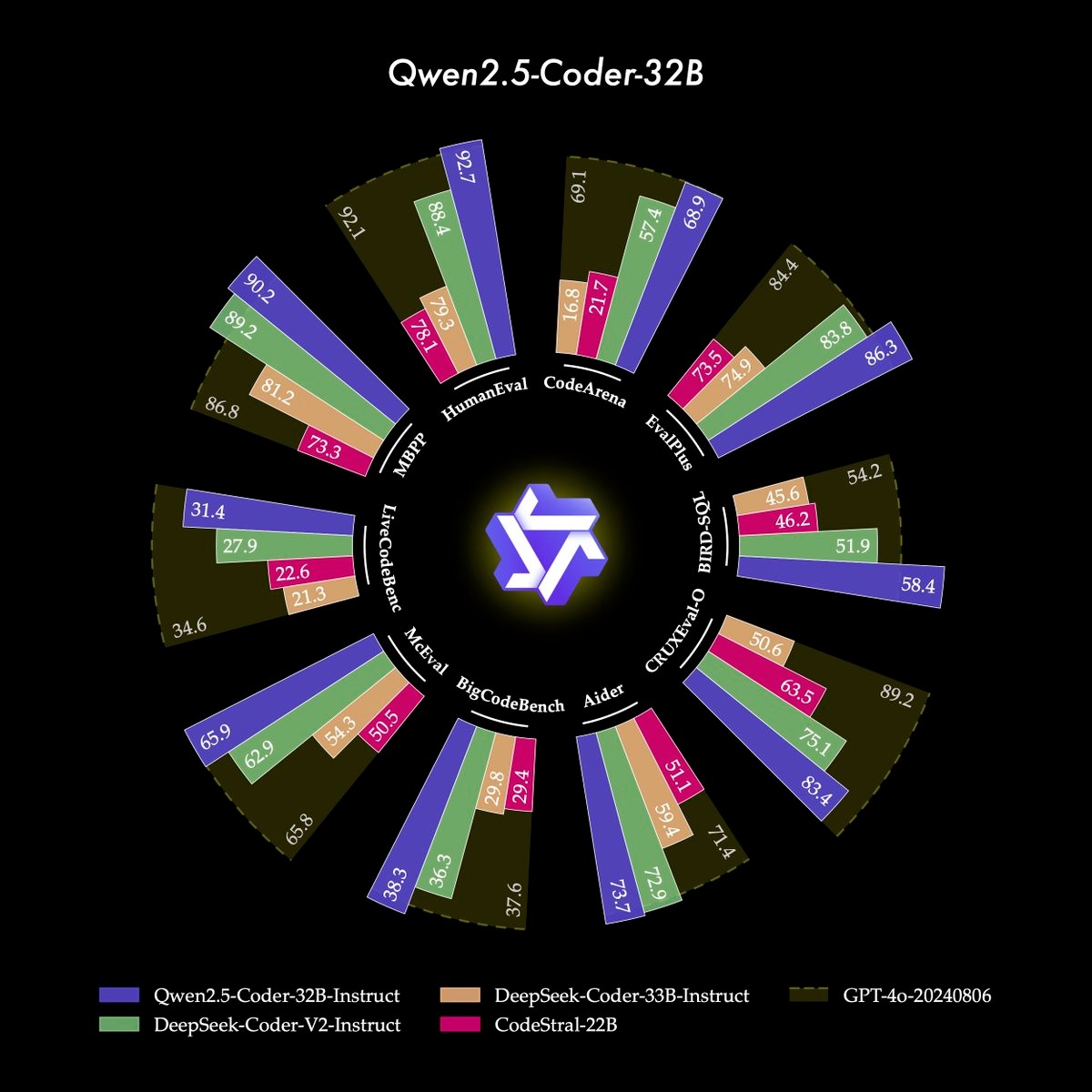

Qwen2.5-Coder-14B-Instruct is a code-focused large language model developed by the Qwen team at Alibaba Cloud and distributed through Hugging Face. It is built on the Qwen2.5 architecture, combines code understanding with code generation, and is instruction-tuned (Instruct), so it accepts tasks described in natural language. It is intended to help developers write code, solve programming problems, and optimize existing code.

Large scale and strong capabilities: Qwen2.5-Coder-14B-Instruct is a 14B-parameter model that can understand complex programming tasks and generate high-quality code.

Multi-language support: It supports common programming languages (such as Python, JavaScript, Java, and C++) as well as multiple development frameworks.

Natural language instructions: Users describe what they need in natural language, and the model generates the corresponding code from that description.

Intelligent code completion and optimization: In addition to generating new code, it can optimize and repair existing code.

1. Install dependencies

First, install the transformers and torch libraries, which are needed to load the model and run it locally:

pip install transformers torch
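After installing, it can help to confirm that both packages are visible to the Python interpreter you plan to use. The following is a minimal sketch using only the standard library; the helper name `installed_versions` is made up for this illustration.

```python
from importlib import metadata


def installed_versions(packages):
    """Map each package name to its installed version, or None if it is missing."""
    versions = {}
    for name in packages:
        try:
            versions[name] = metadata.version(name)
        except metadata.PackageNotFoundError:
            versions[name] = None
    return versions


if __name__ == "__main__":
    # Report the two packages this tutorial depends on
    for name, version in installed_versions(["transformers", "torch"]).items():
        print(f"{name}: {version or 'NOT INSTALLED'}")
```

If either package is reported as not installed, the pip command was probably run in a different environment from the one executing the script.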

2. Load the model

Load the model using Hugging Face's transformers library:

    import torch
    from transformers import AutoModelForCausalLM, AutoTokenizer

    # Load model and tokenizer.
    # "hf-models/..." is the path used in this tutorial; on the Hugging Face Hub
    # the model is published as "Qwen/Qwen2.5-Coder-14B-Instruct".
    model_name = "hf-models/Qwen2.5-Coder-14B-Instruct"
    model = AutoModelForCausalLM.from_pretrained(model_name)
    tokenizer = AutoTokenizer.from_pretrained(model_name)

    # Set up the device (use the GPU if one is available)
    device = "cuda" if torch.cuda.is_available() else "cpu"
    model.to(device)
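Before loading a model of this size, it is worth estimating how much memory the weights alone will need. The sketch below is back-of-the-envelope arithmetic under stated assumptions: it takes the nominal 14 billion parameters from the model name (the exact count differs somewhat) and ignores activations, the KV cache, and framework overhead.

```python
# Bytes needed to store one parameter in each numeric format
BYTES_PER_PARAM = {"float32": 4, "float16": 2, "bfloat16": 2, "int8": 1}


def weight_memory_gb(num_params, dtype):
    """Approximate memory for the weights only, in gigabytes (1 GB = 1e9 bytes)."""
    return num_params * BYTES_PER_PARAM[dtype] / 1e9


# Assumption: "14B" taken at face value from the model name
NOMINAL_PARAMS = 14e9

if __name__ == "__main__":
    for dtype in BYTES_PER_PARAM:
        print(f"{dtype:>8}: ~{weight_memory_gb(NOMINAL_PARAMS, dtype):.0f} GB")
```

Which row applies depends on the dtype the weights are loaded in, which `from_pretrained` lets you choose through its `torch_dtype` argument. If the estimate exceeds the memory of your GPU, a half-precision dtype or a smaller model in the same family may be the more practical choice.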

3. Write a simple code generation function

You can generate code through the model.generate() method. For example, let the model generate a simple Python function that calculates the sum of two numbers:

    def generate_code(prompt):
        # Tokenize the prompt and move the tensors to the model's device
        inputs = tokenizer(prompt, return_tensors="pt").to(device)

        # Use the model to generate code; max_length counts the prompt
        # tokens as well as the newly generated ones
        outputs = model.generate(
            inputs['input_ids'],
            max_length=150,
            num_return_sequences=1,
            do_sample=True,
        )

        # Decode the generated token IDs back into text
        generated_code = tokenizer.decode(outputs[0], skip_special_tokens=True)
        return generated_code

    # Example natural language instruction
    prompt = "Write a Python function that adds two numbers and returns the result."
    generated_code = generate_code(prompt)
    print(generated_code)
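Because this is an Instruct model, prompts are usually wrapped in the tokenizer's chat template rather than passed as raw text. The variant below is a sketch of that approach, not the tutorial's own method. The function names and the system prompt are hypothetical, and `model` and `tokenizer` are assumed to be the objects loaded in step 2.

```python
def build_messages(prompt, system_prompt="You are a helpful coding assistant."):
    """Build the chat-style message list (the system prompt text is an assumption)."""
    return [
        {"role": "system", "content": system_prompt},
        {"role": "user", "content": prompt},
    ]


def generate_code_chat(model, tokenizer, prompt, max_new_tokens=256):
    """Generate a reply using the tokenizer's chat template."""
    messages = build_messages(prompt)

    # Render the messages into the prompt format the model was tuned on
    text = tokenizer.apply_chat_template(
        messages, tokenize=False, add_generation_prompt=True
    )
    inputs = tokenizer([text], return_tensors="pt").to(model.device)

    # max_new_tokens limits only the generated part, not the prompt
    output_ids = model.generate(**inputs, max_new_tokens=max_new_tokens)

    # Drop the prompt tokens so that only the model's answer is decoded
    new_tokens = output_ids[0][inputs["input_ids"].shape[1]:]
    return tokenizer.decode(new_tokens, skip_special_tokens=True)
```

Calling `generate_code_chat(model, tokenizer, "Write a Python function that adds two numbers.")` should return only the model's reply, without the prompt echoed at the start.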

4. Adjust model output

You can tune generation as needed, for example the maximum length, the number of returned sequences, and the sampling temperature (which controls randomness):

    outputs = model.generate(
        inputs['input_ids'],
        max_length=200,          # Maximum total length (prompt plus generated tokens)
        num_return_sequences=1,  # Return one result
        temperature=0.7,         # Lower values make sampling less random
        top_p=0.9,               # Nucleus sampling: restrict to the top 90% of probability mass
        do_sample=True,          # Sample instead of using greedy decoding
    )
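To see what `temperature` and `top_p` do, it can help to apply them by hand to a toy next-token distribution. The sketch below is a simplified pure-Python illustration of the two ideas, not the transformers implementation. The logits and function names are invented for the example.

```python
import math


def softmax_with_temperature(logits, temperature=1.0):
    """Turn logits into probabilities; a lower temperature sharpens the distribution."""
    scaled = [x / temperature for x in logits]
    peak = max(scaled)  # subtract the maximum for numerical stability
    exps = [math.exp(x - peak) for x in scaled]
    total = sum(exps)
    return [e / total for e in exps]


def top_p_filter(probs, top_p=0.9):
    """Keep the most likely tokens until their cumulative probability reaches top_p,
    then renormalize. Returns a dict mapping token index to probability."""
    order = sorted(range(len(probs)), key=lambda i: probs[i], reverse=True)
    kept, cumulative = [], 0.0
    for i in order:
        kept.append(i)
        cumulative += probs[i]
        if cumulative >= top_p:
            break
    kept_total = sum(probs[i] for i in kept)
    return {i: probs[i] / kept_total for i in kept}


if __name__ == "__main__":
    toy_logits = [2.0, 1.0, 0.1]  # made-up scores for three candidate tokens
    for t in (1.0, 0.7):
        probs = softmax_with_temperature(toy_logits, temperature=t)
        print(f"temperature={t}:", [round(p, 3) for p in probs])
    print("top_p=0.9 keeps:", top_p_filter([0.5, 0.3, 0.15, 0.05], top_p=0.9))
```

Lowering the temperature moves probability toward the most likely token, while `top_p` removes the low-probability tail before sampling. Both only take effect when `do_sample=True`.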

5. Output the generated code

The generated token IDs can be decoded and printed via tokenizer.decode(). Because outputs[0] contains the prompt tokens followed by the generated tokens, the decoded text starts with the prompt:

    generated_code = tokenizer.decode(outputs[0], skip_special_tokens=True)
    print("Generated Code:\n", generated_code)

Suppose you wish to generate a more complex program, such as a simple web crawler:

    prompt = """
    Write a Python program that uses the BeautifulSoup library to scrape a webpage.
    The program should extract all the links (anchor tags) from the page and print them.
    """
    generated_code = generate_code(prompt)
    print("Generated Web Scraping Code:\n", generated_code)

This example asks the model to write a simple web crawler and prints the code it generates.
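The decoded text is not always pure code: instruction-tuned models often mix explanations with code wrapped in Markdown fences. Assuming that output format (it is not guaranteed), the sketch below pulls out the fenced blocks and checks that each one at least parses as Python before you use it. The helper names are hypothetical.

```python
import ast
import re

# Built from single backticks so this example contains no literal fence
FENCE = "`" * 3

# An opening fence with an optional language tag, the body, then a closing fence
_BLOCK_PATTERN = re.compile(FENCE + r"[\w+-]*\n(.*?)" + FENCE, re.DOTALL)


def extract_code_blocks(text):
    """Return the contents of every Markdown-fenced block found in text."""
    return [body.strip() for body in _BLOCK_PATTERN.findall(text)]


def is_valid_python(source):
    """Check that source parses as Python; this does not run or verify the code."""
    try:
        ast.parse(source)
    except SyntaxError:
        return False
    return True


if __name__ == "__main__":
    # A made-up reply standing in for real model output
    reply = (
        "Here is the function:\n"
        + FENCE + "python\ndef add(a, b):\n    return a + b\n" + FENCE
        + "\nIt returns the sum."
    )
    for block in extract_code_blocks(reply):
        print("parses as Python:", is_valid_python(block))
        print(block)
```

A successful parse only rules out syntax errors. Generated code should still be read and tested before it is run against real websites or data.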

Qwen2.5-Coder-14B-Instruct is a code generation model that can generate code in various programming languages from natural language instructions.

Using the transformers library provided by Hugging Face, you can easily load and interact with the model and quickly generate code.

You can customize the model's output, including setting the generation length, sampling method, etc., to meet specific needs.

With simple code examples, you can quickly get up to speed and start using the model to help with your own programming tasks.