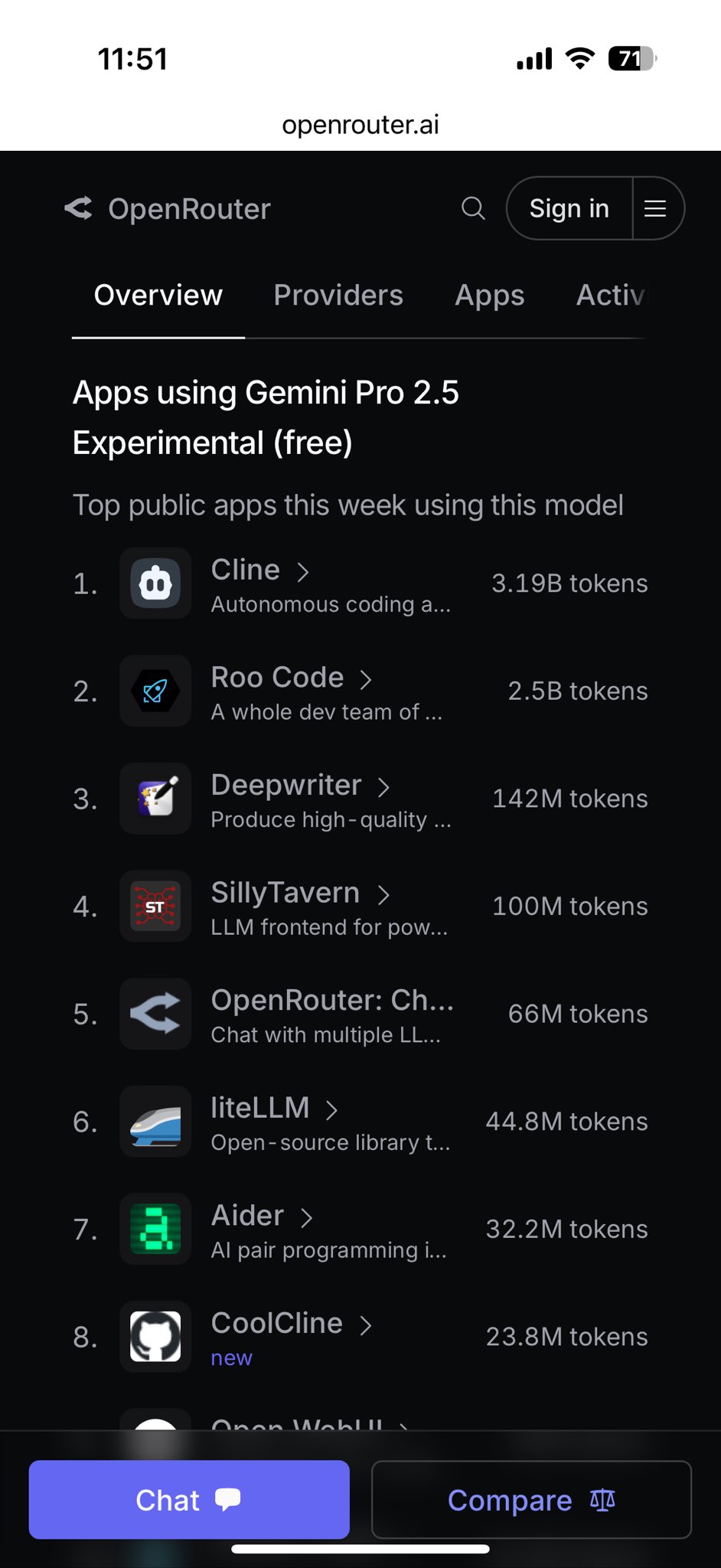

March 28, 2025: Amid the rapid development of artificial intelligence technology, the Gemini 2.5 Pro model launched by Google DeepMind has attracted wide attention for its performance and multimodal capabilities. Today, Cline, a well-known AI development tool, announced official support for Gemini 2.5 Pro, giving developers a free and powerful option for AI-driven coding and debugging. The news has sparked widespread discussion in the technology community.

Cline is a popular AI-assisted development tool, usually integrated into development environments such as VS Code, that helps developers generate code, manage projects, and debug complex problems through natural language instructions. According to the latest news, the Cline team released an update on March 27 that adds support for Gemini 2.5 Pro. The update broadens Cline's model selection and gives users a more efficient development experience.

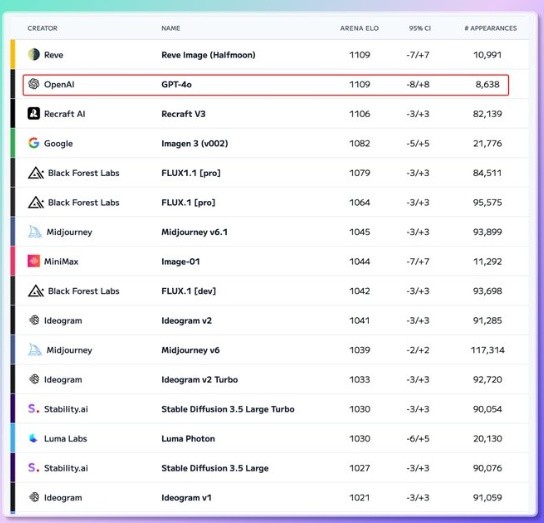

Gemini 2.5 Pro is Google's latest experimental AI model, described as its "smartest model to date". It has a context window of up to 1 million tokens (to be expanded to 2 million in the future), accepts text, image, audio, and video input, and performs well in coding, mathematics, and science benchmarks. On the code-focused SWE-Bench Verified evaluation, Gemini 2.5 Pro scores 63.8%, placing it near the top and second only to Anthropic's Claude 3.7 Sonnet.

Cline has published detailed instructions on how to use Gemini 2.5 Pro for free. According to reports, users only need to update Cline to the latest version and select Gemini 2.5 Pro as the model in the settings; they can then use it at no cost through API providers such as Google AI Studio or OpenRouter. This lowers the barrier to entry for developers. For users who work with large code bases or complex multi-step tasks, the long context and reasoning capabilities of Gemini 2.5 Pro are a particular benefit.

After the news was released, the developer community responded quickly. Several developers said that the results of trying Gemini 2.5 Pro in Cline were striking. They specifically mentioned: "Large contexts will significantly improve the efficiency of construction and debugging, which is even better than Claude." This reflects the potential of Gemini 2.5 Pro in real-world applications, especially for work that involves long documents or multi-file projects.

Another developer pointed out that the updated Cline supports Gemini 2.5 Pro and that the model is currently available for free through both Google Gemini and OpenRouter. Some comments, however, pointed to a small drawback: "both are currently slow." This may be related to the model's high computing demands or to the experimental state of the API, but it has not dampened developers' expectations for the model's capabilities.

Technology enthusiasts from Japan also commented: "The experimental version of Gemini 2.5 Pro is now available in the Cline-based tool via OpenRouter, which is really exciting!" This shows that Cline's update has attracted attention worldwide, including among multilingual developer communities.

The highlight of Gemini 2.5 Pro is its "thinking" ability. Unlike traditional models that output answers directly, it reasons step by step before generating a response, which is intended to make the results more accurate and context-sensitive. This feature is particularly practical in Cline. For example, developers can upload an entire repository through Cline and let Gemini 2.5 Pro analyze and optimize the code, or generate a complete application from a single prompt.

As an independent AI tool, Cline itself emphasizes efficient code generation and project management. The addition of Gemini 2.5 Pro further enhances its capabilities, especially in the following aspects:

However, community feedback also mentioned some areas that need improvement. For example, some users reported that when Cline is used with Gemini 2.5 Pro, responses are sometimes too long and may even repeat themselves. These issues may need to be addressed in subsequent versions.

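According to the official Cline guidelines, users can experience Gemini 2.5 Pro for free by updating Cline to the latest version, selecting Gemini 2.5 Pro as the model in the settings, and connecting through an API provider such as Google AI Studio or OpenRouter.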

Note that because Gemini 2.5 Pro is still in the experimental stage, its API may be rate-limited (for example, the Google AI Studio free tier allows 50 requests per day), and response speeds may fluctuate with network or server load.
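To make the daily limit concrete, here is a minimal Python sketch of a client-side counter that stops sending requests once a per-day quota is used up. It is an illustration only, not part of Cline or any Google SDK: the `DailyRequestBudget` class and its method names are hypothetical, the default of 50 is simply the free-tier figure quoted above, and the real quota may reset at a different time than the local calendar day assumed here.

```python
from dataclasses import dataclass, field
from datetime import date
from typing import Optional


@dataclass
class DailyRequestBudget:
    """Hypothetical client-side tracker for a per-day request quota."""

    limit: int = 50  # free-tier figure quoted in the article; may change
    used: int = 0
    day: date = field(default_factory=date.today)

    def try_acquire(self, today: Optional[date] = None) -> bool:
        """Reserve one request; return False if today's quota is spent."""
        today = today or date.today()
        if today != self.day:
            # Assumption: the quota resets when the calendar day changes.
            self.day = today
            self.used = 0
        if self.used >= self.limit:
            return False
        self.used += 1
        return True

    def remaining(self) -> int:
        """Requests left for the day currently being tracked."""
        return self.limit - self.used
```

A caller would check `budget.try_acquire()` before each model request and fall back to waiting, or to another provider, when it returns `False`.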

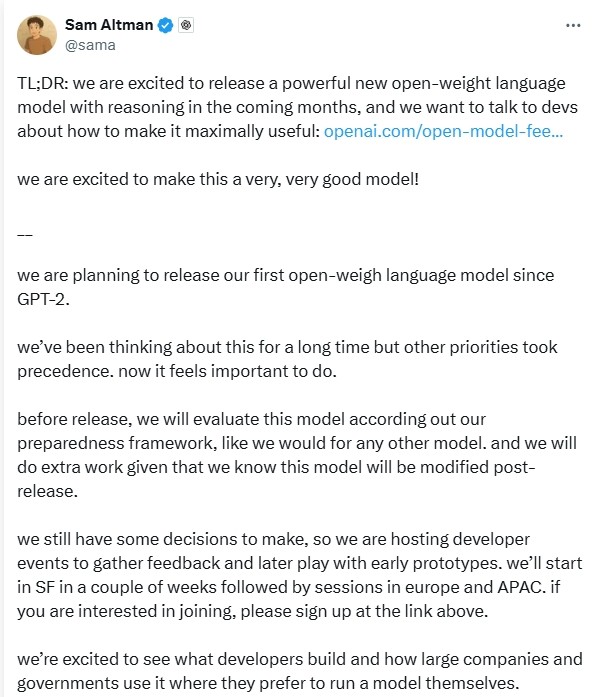

Cline's support for Gemini 2.5 Pro not only gives individual developers a free way to try the model, but also sets a new benchmark for AI-powered software development. The very large context window and multimodal capabilities of Gemini 2.5 Pro give it an advantage on complex tasks over competitors such as OpenAI's o3-mini (200K token context) or Anthropic's Claude 3.7 Sonnet (200K token context).
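The practical effect of these context sizes can be estimated with simple arithmetic. The Python sketch below uses the window sizes quoted in this article and a rough rule of thumb of about 4 characters per token. That ratio is an assumption and real tokenizers differ, and the function names and the 8,000-token reply reserve are also illustrative choices rather than anything defined by Cline or the model providers.

```python
# Context window sizes in tokens, as quoted in the article.
CONTEXT_WINDOWS = {
    "Gemini 2.5 Pro": 1_000_000,
    "o3-mini": 200_000,
    "Claude 3.7 Sonnet": 200_000,
}

CHARS_PER_TOKEN = 4  # assumption: rough heuristic, not a real tokenizer


def estimate_tokens(text: str) -> int:
    """Very rough token estimate from the character count."""
    return -(-len(text) // CHARS_PER_TOKEN)  # ceiling division


def models_that_fit(total_tokens: int, reserve: int = 8_000) -> list:
    """Models whose window holds the input plus a reserve for the reply."""
    return [
        name
        for name, window in CONTEXT_WINDOWS.items()
        if total_tokens + reserve <= window
    ]


# Example: about 2 million characters of source code is roughly
# 500,000 tokens, which exceeds a 200K window but fits in 1M.
repo_tokens = estimate_tokens("x" * 2_000_000)
print(repo_tokens, models_that_fit(repo_tokens))
```

Under these assumptions, a code base of about 2 million characters fits only in the 1 million token window, which is the kind of multi-file workload the community comments above describe.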

For businesses, this combination may bring the following value:

Nevertheless, the experimental nature of Gemini 2.5 Pro means it is still being improved. The Cline team and Google DeepMind may further optimize model performance and tool integration in response to user feedback.

Cline now supports Gemini 2.5 Pro, an update that marks another deep integration of AI development tools with cutting-edge models. By providing this feature for free, Cline lowers the barrier for developers to access top-tier AI technology and paves the way for wider use of Gemini 2.5 Pro. The warm response from the developer community shows that the combination has sparked anticipation and exploration among developers around the world. Although the speed problem has yet to be solved, the long context, multimodal, and reasoning capabilities of Gemini 2.5 Pro bring new possibilities to AI-assisted development. As Cline and Gemini 2.5 Pro continue to improve, there is reason to expect more innovative applications.