Noam Brown, who leads AI reasoning research at OpenAI, said in a recent panel discussion at Nvidia's GTC conference that some forms of "reasoning" AI models could have arrived 20 years earlier had researchers known "the right methods and algorithms" at the time. He said there were a number of reasons why this research direction was neglected.

Brown recalled his work on game-playing AI at Carnegie Mellon University, including Pluribus, which defeated top human poker professionals. He said what made the AI he helped create unique at the time was that it “reasoned” through problems rather than relying on pure brute-force computation. Brown noted that humans spend a lot of time thinking before acting in difficult situations, and suggested that the same approach could be very beneficial to AI.

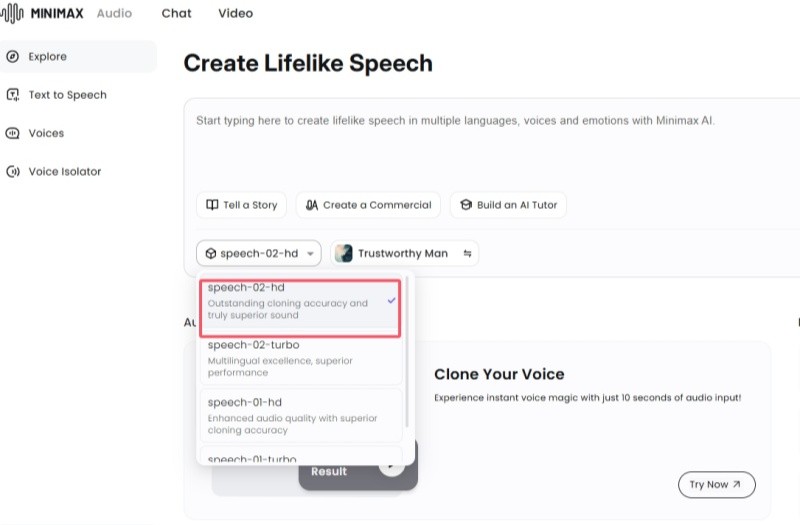

Brown is also one of the architects of o1, an OpenAI model that uses a technique called test-time inference to "think" before responding to a query. Test-time inference applies additional computation to a running model to drive a form of “reasoning.” Generally speaking, so-called reasoning models are more accurate and reliable than traditional models, especially in domains such as mathematics and science.
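To make the idea of spending extra computation at inference time concrete, here is a minimal toy sketch in Python. It is not OpenAI's method for o1, which the discussion does not describe. It swaps in a much simpler technique, majority voting over repeated samples, and every name and number in it (`noisy_solver`, `answer_with_budget`, the 40% per-sample success rate, the answer 42) is a hypothetical chosen purely for illustration.

```python
import random
from collections import Counter

CORRECT_ANSWER = 42  # hypothetical ground truth for the toy problem


def noisy_solver(rng, p_correct=0.4):
    """Hypothetical stand-in for one model sample: right with probability p_correct."""
    if rng.random() < p_correct:
        return CORRECT_ANSWER
    # Wrong answers are scattered over many values, so they rarely agree.
    return rng.randint(0, 20)


def answer_with_budget(rng, n_samples):
    """Spend more compute at inference time: draw n_samples and take the majority vote."""
    votes = Counter(noisy_solver(rng) for _ in range(n_samples))
    return votes.most_common(1)[0][0]


def accuracy(n_samples, trials=2000, seed=0):
    """Fraction of trials in which the voted answer matches the ground truth."""
    rng = random.Random(seed)
    hits = sum(answer_with_budget(rng, n_samples) == CORRECT_ANSWER
               for _ in range(trials))
    return hits / trials


if __name__ == "__main__":
    for budget in (1, 5, 25):
        print(f"samples per query: {budget:2d}  accuracy: {accuracy(budget):.2f}")
```

In this toy setting, accuracy rises as the per-query sample budget grows, even though the underlying solver never changes. That is the general intuition behind trading inference-time computation for reliability.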

During the panel, Brown was asked whether academia could still hope to run experiments on the scale of labs like OpenAI, given the widespread lack of computing resources at colleges and universities. He acknowledged that this has become harder in recent years as models have grown more compute-intensive. But he also said that academics can make an impact by exploring areas that require less computing, such as model architecture design.

Brown stressed that there are opportunities for collaboration between frontier labs and academia. He said frontier labs pay attention to academic publications and carefully evaluate whether a paper makes a sufficiently convincing argument that the research would be very effective if scaled up further. If a paper makes that case compellingly, he said, the labs will investigate it in depth.

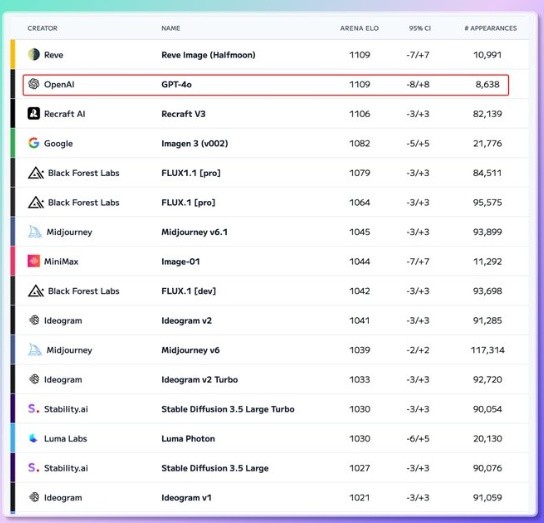

Brown also singled out AI benchmarking as an area where academics can play an important role. He called the current state of AI benchmarks “very bad,” pointing out that they tend to test esoteric knowledge and that their scores correlate poorly with proficiency on the tasks most people care about, which has led to widespread confusion about models' capabilities and improvements. Brown believes that improving AI benchmarks does not require a lot of computing resources.

It is worth noting that Brown's initial remarks in this discussion referred to his game-playing AI research before joining OpenAI, such as Pluribus, rather than to reasoning models like o1.