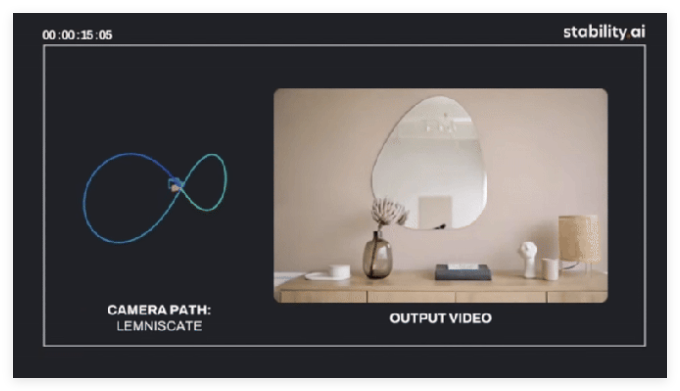

Stability AI has launched its latest AI model, Stable Virtual Camera, which converts 2D images into "immersive" videos with realistic depth and perspective. Virtual cameras are widely used in digital filmmaking and 3D animation, and with Stable Virtual Camera, Stability AI aims to bring the benefits of generative AI to this tool, giving users greater control and more room for customization.

Stable Virtual Camera can generate "novel views" of a scene from one or more input images (up to 32), and users can also specify the camera angle. The model can produce videos that follow a user-defined "dynamic" camera path or one of several preset paths, including effects such as "spiral", "zoom", "move", and "pan".

The current version of Stable Virtual Camera is a research preview that can generate videos in three aspect ratios: square (1:1), vertical (9:16), and horizontal (16:9), up to 1,000 frames long. However, Stability AI warns that the model may produce lower-quality results in some cases, especially with images containing humans, animals, or "dynamic textures" such as water surfaces.

Stable Virtual Camera is currently available on Hugging Face under a non-commercial license for research use, and users can download and try it. Stability AI is the company behind Stable Diffusion, a popular image generation model. After past management turmoil and financial difficulties, Stability AI secured a new round of funding from investors last year in an effort to turn its situation around.

Stability AI has since hired a new CEO and appointed film director James Cameron to its board. It has also released several new image generation models, and in March this year it partnered with chipmaker Arm to launch an AI model that generates audio and sound effects, with the goal of bringing these features to mobile devices running Arm chips.

Project page: https://top.aibase.com/tool/stable-virtual-camera