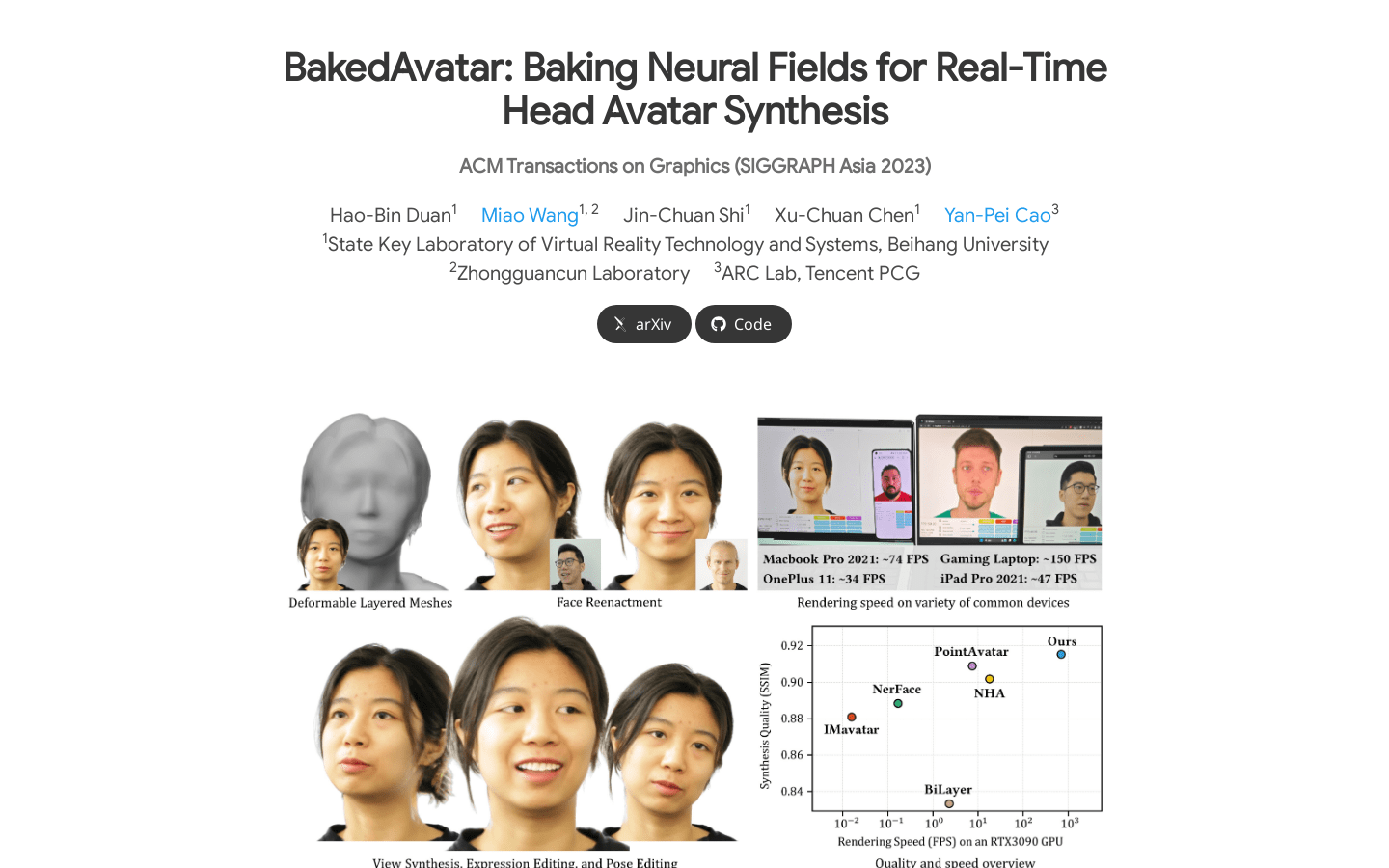

BakedAvatar is a new representation for real-time neural head avatar synthesis that can be deployed in standard polygon rasterization pipelines. The method extracts deformable multi-layer meshes from learned isosurfaces of the head and computes expression-, pose-, and view-dependent appearances that can be baked into static textures, enabling efficient real-time 4D head avatar synthesis. We propose a three-stage pipeline: learning continuous deformation, manifold, and radiance fields; extracting layered meshes and textures; and fine-tuning texture details with differentiable rasterization. Experimental results show that our representation produces synthesis results comparable in quality to other state-of-the-art methods while significantly reducing inference time. We further demonstrate a variety of head avatar synthesis results from monocular videos, including view synthesis, face reenactment, expression editing, and pose editing, all at interactive frame rates.
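To make the multi-layer idea concrete, here is a minimal sketch of how color samples from several rasterized mesh layers could be combined for a single pixel. It assumes standard front-to-back alpha compositing; the function name `composite_layers` and the per-layer `(color, alpha)` format are hypothetical and are not taken from the BakedAvatar code.

```python
from typing import List, Tuple

RGB = Tuple[float, float, float]


def composite_layers(layers: List[Tuple[RGB, float]]) -> RGB:
    """Front-to-back alpha compositing of per-layer (color, alpha) samples.

    `layers` is ordered from the layer nearest the camera to the farthest.
    This is an illustrative assumption about how layered meshes are blended,
    not the authors' implementation.
    """
    out = [0.0, 0.0, 0.0]
    transmittance = 1.0  # fraction of light not yet blocked by nearer layers
    for color, alpha in layers:
        weight = transmittance * alpha
        for c in range(3):
            out[c] += weight * color[c]
        transmittance *= 1.0 - alpha
    return (out[0], out[1], out[2])


# A half-transparent red layer in front of an opaque blue layer.
pixel = composite_layers([((1.0, 0.0, 0.0), 0.5), ((0.0, 0.0, 1.0), 1.0)])
```

In a real rasterization pipeline this blending would typically be done by the GPU's blend stage rather than in a Python loop; the loop only spells out the arithmetic.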

Target audience:

"Real-time avatar synthesis in VR/AR, telepresence, video games and other applications"

Example usage scenarios:

Real-time avatar synthesis in VR/AR applications

Avatar synthesis in video games

Real-time avatar synthesis in telepresence

Product features:

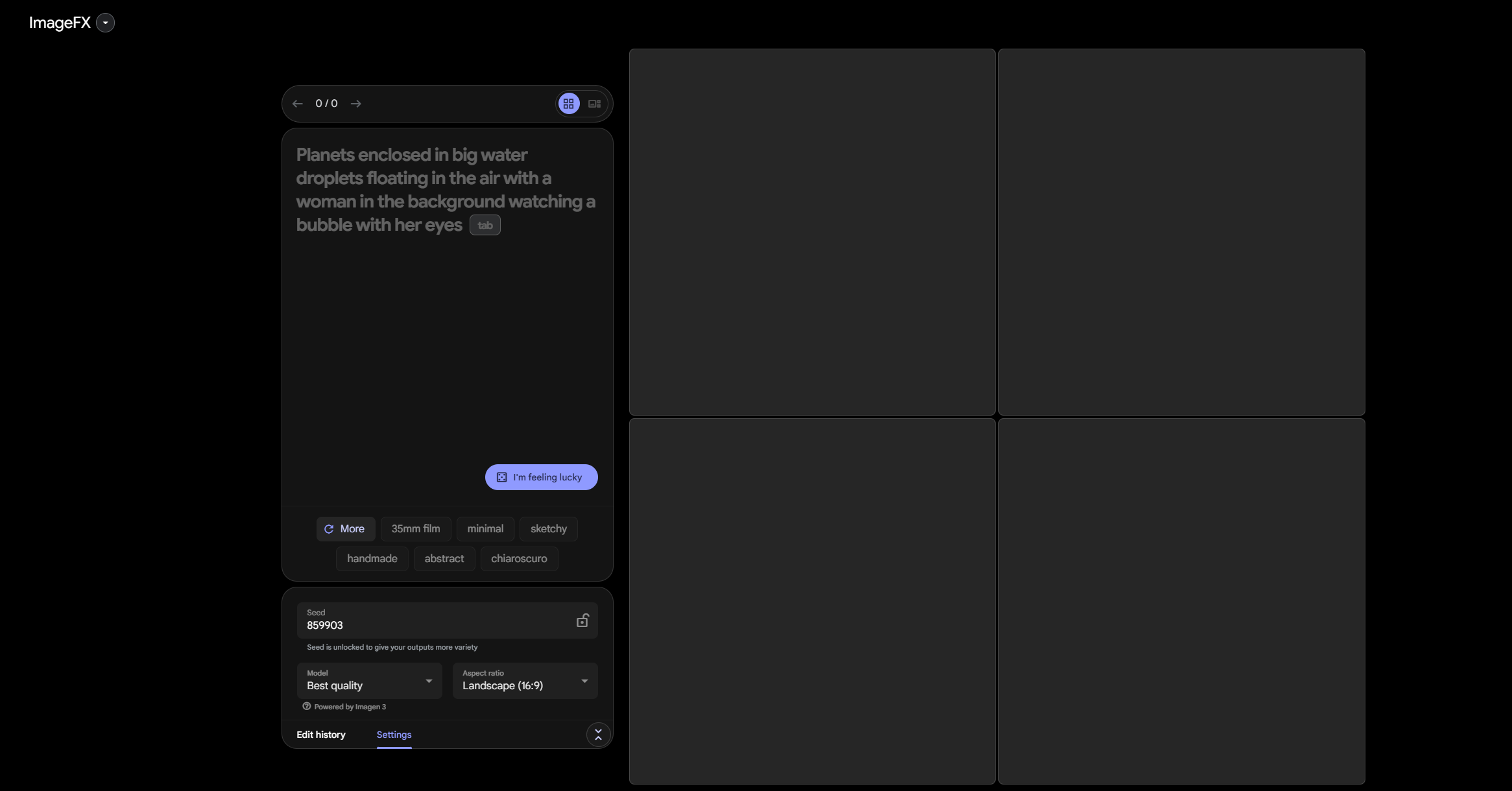

Learn continuous deformation, manifold, and radiance fields

Extract layered meshes and textures

Fine-tune texture details with differentiable rasterization
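One way to picture how a dynamic appearance can come from static baked textures is a weighted combination of several texture values, where only the weights change with expression, pose, and view. The sketch below illustrates that idea under stated assumptions: the linear blend, the function name `blend_baked_bases`, and the way the weights are supplied are all hypothetical, and in practice the weights would come from a learned model rather than being passed in by hand.

```python
from typing import List, Sequence


def blend_baked_bases(bases: Sequence[Sequence[float]],
                      weights: Sequence[float]) -> List[float]:
    """Blend static texel values from several baked textures.

    `bases` holds one color per baked texture for the same texel, and
    `weights` holds one dynamic weight per texture. Both the linear blend
    and the source of the weights are assumptions made for illustration.
    """
    if len(bases) != len(weights):
        raise ValueError("need exactly one weight per baked texture")
    if not bases:
        return []
    channels = len(bases[0])
    return [sum(w * b[c] for b, w in zip(bases, weights))
            for c in range(channels)]


# Two baked colors for one texel, mixed with weights for the current frame.
texel = blend_baked_bases([(1.0, 0.0, 0.0), (0.0, 1.0, 0.0)], [0.25, 0.75])
```

Because the textures themselves never change, only the small set of weights has to be recomputed per frame, which is what makes this kind of scheme attractive for interactive frame rates.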