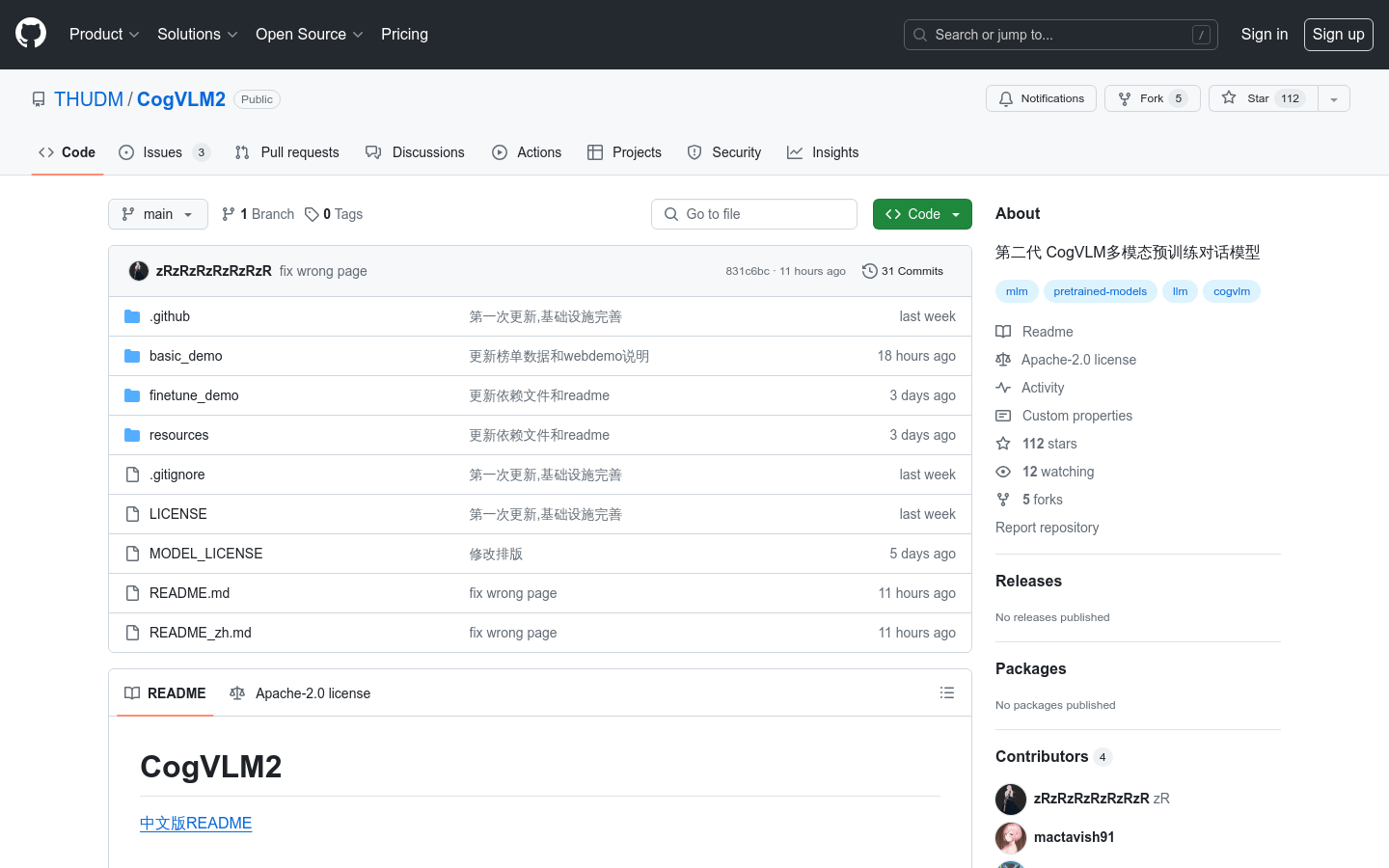

CogVLM2

CogVLM2 is a high-performance, open-source, multilingual model for multimodal dialogue and image understanding, with support for long text inputs and high-resolution images.

What is CogVLM2?

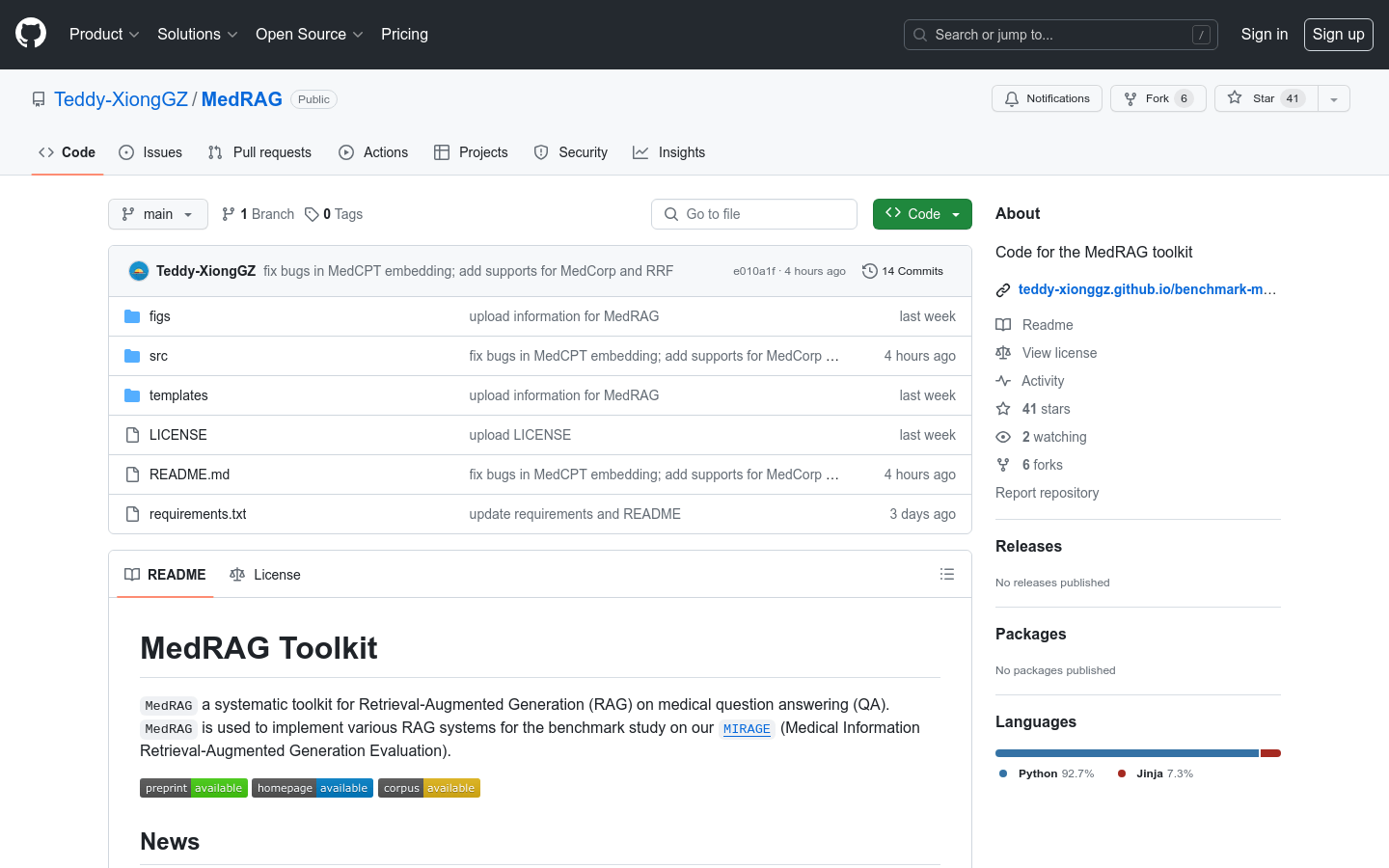

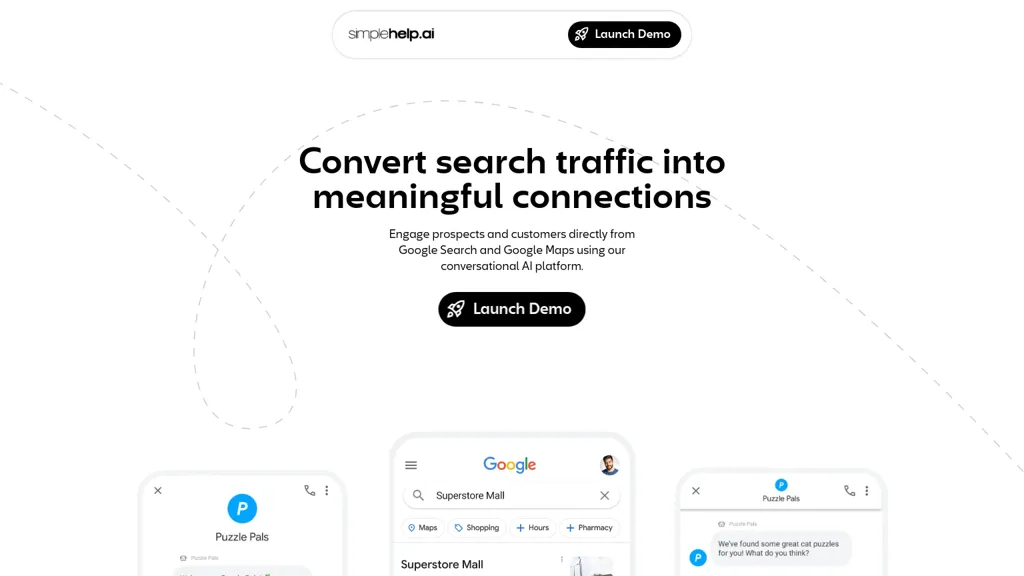

CogVLM2 is an open-source, multilingual, pretrained multimodal dialogue model developed by Tsinghua University. It supports both Chinese and English, processes images at resolutions up to 1344x1344, and handles text lengths of up to 8K. It performs strongly on benchmarks such as TextVQA and DocVQA, with significant improvements over its predecessor, CogVLM. It is aimed at researchers and developers building intelligent systems in fields such as customer service, education, and healthcare.
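To make the 1344x1344 resolution limit concrete, here is a minimal sketch of how an image's dimensions could be checked and scaled down before being sent to the model. The helper `fit_within` and the constant `MAX_SIDE` are hypothetical names used for illustration only; they are not part of any CogVLM2 API, and the model's own preprocessing may handle resizing differently.

```python
# Illustrative only: fit_within and MAX_SIDE are hypothetical names,
# not part of CogVLM2. The 1344 limit is taken from the description above.
MAX_SIDE = 1344


def fit_within(width: int, height: int, max_side: int = MAX_SIDE) -> tuple[int, int]:
    """Return (width, height) scaled down so the longer side is at most max_side.

    Aspect ratio is preserved (up to integer rounding). Images that already
    fit are returned unchanged; this helper never upscales.
    """
    if width <= 0 or height <= 0:
        raise ValueError("width and height must be positive")

    longest = max(width, height)
    if longest <= max_side:
        return width, height

    # Integer arithmetic with round-half-up avoids floating-point surprises.
    new_width = max(1, (width * max_side + longest // 2) // longest)
    new_height = max(1, (height * max_side + longest // 2) // longest)
    return new_width, new_height


if __name__ == "__main__":
    for size in [(800, 600), (4000, 3000), (1344, 1344)]:
        print(size, "->", fit_within(*size))
```

The resulting dimensions can then be passed to whatever image library is in use (for example, a resize call) before building the request. Whether to downscale beforehand or rely on the model's own preprocessing is a design choice that depends on the deployment.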