What Is Contrastive Preference Optimization in Machine Translation?

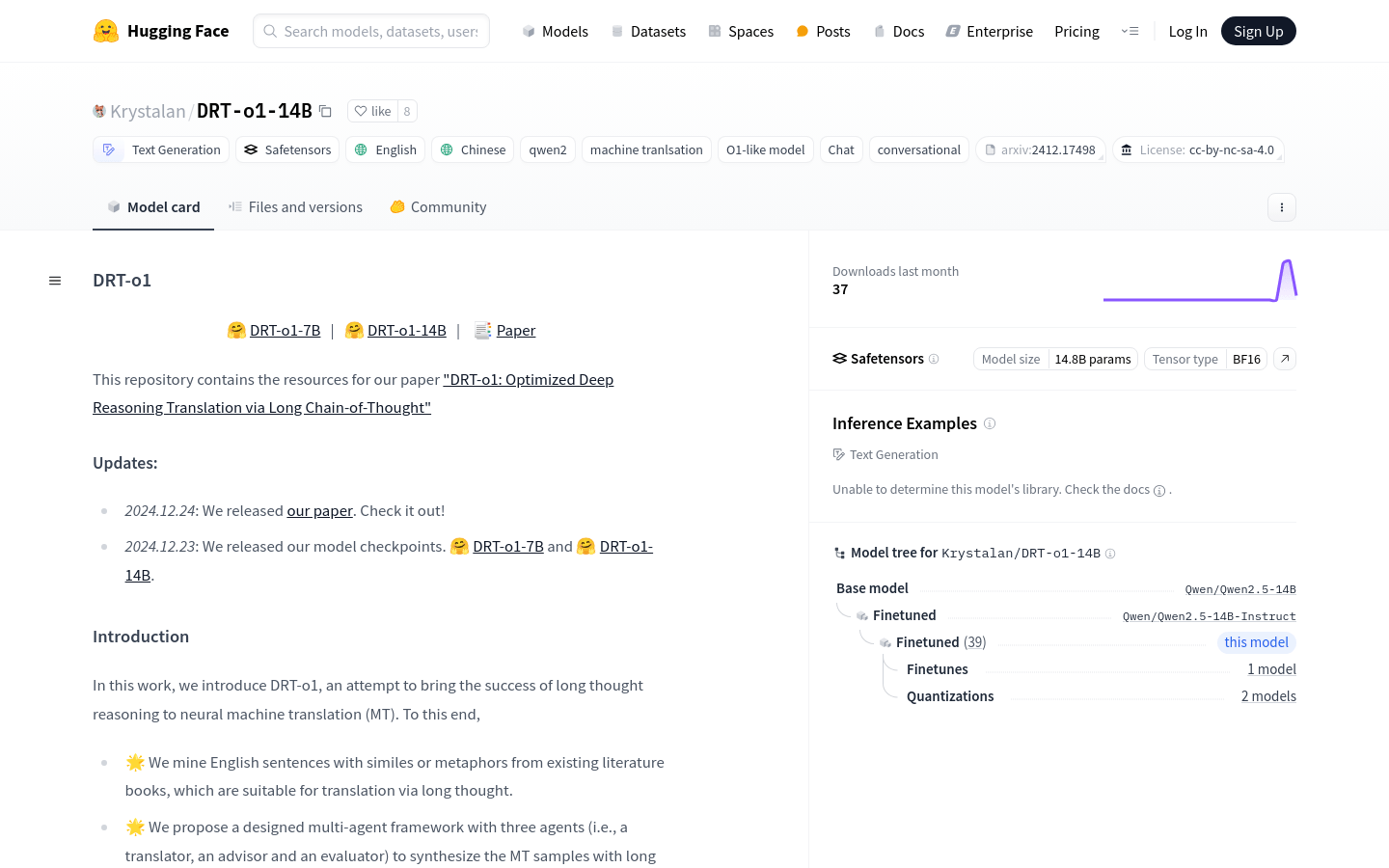

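Contrastive Preference Optimization (CPO) is a training method for machine translation models that teaches a model to avoid generating translations that are adequate but not perfect. Rather than only imitating reference translations, the model learns from pairs of translations of the same source sentence, one preferred and one dis-preferred, and is pushed to assign higher probability to the preferred one. Applied to ALMA models with only 22K parallel sentences and 12M trainable parameters, CPO yields significant improvements: the resulting model, ALMA-R, matches or exceeds the performance of WMT competition winners and GPT-4 on the WMT'21, WMT'22, and WMT'23 test sets.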
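The CPO paper writes the objective as the sum of a preference term and a negative log-likelihood term on the preferred translation: L = -log σ(β·log πθ(y_w|x) − β·log πθ(y_l|x)) − log πθ(y_w|x), averaged over preference pairs, where y_w is the preferred and y_l the dis-preferred translation of source x. Below is a minimal sketch in plain Python, assuming the sequence log-probabilities (summed per-token log-probabilities) have already been computed by the model. The function names and the default β = 0.1 are illustrative assumptions, not taken from any particular library.

```python
import math
from typing import Sequence


def log_sigmoid(z: float) -> float:
    """Numerically stable log(sigmoid(z))."""
    if z >= 0:
        return -math.log1p(math.exp(-z))
    return z - math.log1p(math.exp(z))


def cpo_loss(
    logp_chosen: Sequence[float],
    logp_rejected: Sequence[float],
    beta: float = 0.1,  # illustrative value, an assumption
) -> float:
    """Sketch of the CPO loss averaged over a batch of preference pairs.

    logp_chosen[i]   -- log pi_theta(y_w | x_i), preferred translation
    logp_rejected[i] -- log pi_theta(y_l | x_i), dis-preferred translation
    """
    if not logp_chosen or len(logp_chosen) != len(logp_rejected):
        raise ValueError("need equally sized, non-empty batches")
    prefer = 0.0
    nll = 0.0
    for lw, ll in zip(logp_chosen, logp_rejected):
        # Preference term: smaller when the preferred translation is
        # more likely than the dis-preferred one.
        prefer += -log_sigmoid(beta * (lw - ll))
        # NLL term: keeps the preferred translation likely in absolute terms.
        nll += -lw
    n = len(logp_chosen)
    return prefer / n + nll / n


# Usage with made-up log-probabilities for two preference pairs.
loss = cpo_loss([-4.2, -6.0], [-7.5, -6.5], beta=0.1)
print(f"CPO loss: {loss:.4f}")
```

In a real training loop the log-probabilities would be tensors from a differentiable framework such as PyTorch so the loss can be backpropagated; plain floats are used here only to keep the arithmetic visible.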

Who Can Use Contrastive Preference Optimization?

This method suits researchers, engineers, and organizations working on machine translation who want to improve the quality of their translation models.

Where Can Contrastive Preference Optimization Be Applied?

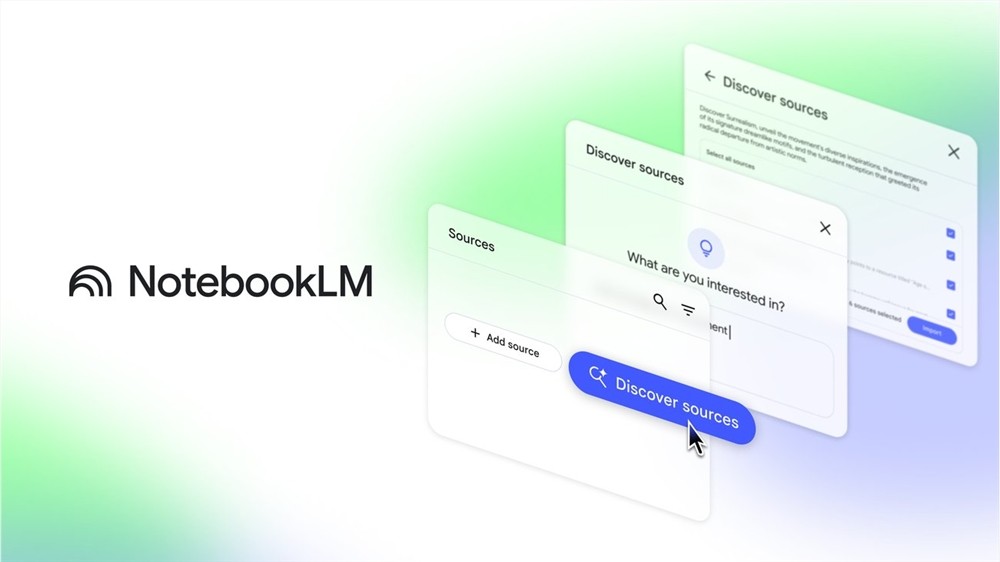

Enhance the capabilities of online translation platforms.

Improve enterprise-level machine translation systems.

Elevate translation quality in mobile applications.

Key Features:

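Trains models on preference data that contrasts a preferred translation with a dis-preferred one for each source sentence.

Improves translation quality beyond supervised fine-tuning alone, as with ALMA-R compared with ALMA.

Needs no frozen reference model during training, making it cheaper in memory and compute than Direct Preference Optimization (DPO).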
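Training with CPO depends on preference pairs. As we understand the CPO paper, each source sentence comes with several candidate translations (system outputs and the human reference), each candidate is scored with a reference-free quality-estimation metric, and the highest- and lowest-scoring candidates become the preferred and dis-preferred translations. The sketch below covers only that selection step; the `score` callable and the toy scores in the usage example are hypothetical stand-ins for a real quality metric.

```python
from dataclasses import dataclass
from typing import Callable, Sequence


@dataclass(frozen=True)
class PreferencePair:
    source: str
    chosen: str    # highest-scoring candidate (preferred)
    rejected: str  # lowest-scoring candidate (dis-preferred)


def build_preference_pair(
    source: str,
    candidates: Sequence[str],
    score: Callable[[str, str], float],  # hypothetical quality metric
) -> PreferencePair:
    """Pick the best and worst candidate translations of `source` by score."""
    if len(candidates) < 2:
        raise ValueError("need at least two candidate translations")
    # Sort ascending by score: first item is worst, last item is best.
    ranked = sorted(candidates, key=lambda hyp: score(source, hyp))
    return PreferencePair(source=source, chosen=ranked[-1], rejected=ranked[0])


# Usage with made-up scores standing in for a real quality-estimation metric.
toy_scores = {"Good morning": 0.92, "Morning good": 0.55, "Good": 0.30}
pair = build_preference_pair(
    "Guten Morgen",
    ["Morning good", "Good morning", "Good"],
    lambda src, hyp: toy_scores[hyp],
)
print(pair.chosen, "|", pair.rejected)
```

Ranking by a single score keeps the sketch simple; combining several metrics, for example by averaging them, would only change the `score` callable.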