What is Dolphin R1?

Dolphin R1 is a dataset created by the Cognitive Computations team for training reasoning models similar to the DeepSeek-R1 distilled models. It contains 300,000 reasoning samples from DeepSeek-R1, 300,000 reasoning samples from Gemini 2.0 Flash Thinking, and 200,000 Dolphin chat samples. Combining reasoning traces with general chat data gives researchers and developers material for improving both a model's reasoning and its conversational ability. The dataset's creation was sponsored by several companies, including Dria, Chutes, and Crusoe Cloud.
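As a quick check on the mix, the stated counts sum to 800,000 samples. The two reasoning sources therefore make up 75% of the data. The snippet below recomputes each source's share from the numbers given above. The dictionary keys are illustrative labels, not official subset names.

```python
# Sample counts per source, as stated in the description above.
# The keys are illustrative labels, not official subset names.
SOURCES = {
    "DeepSeek-R1 reasoning": 300_000,
    "Gemini 2.0 Flash Thinking reasoning": 300_000,
    "Dolphin chat": 200_000,
}


def mixture_shares(counts):
    """Return each source's fraction of the total sample count."""
    total = sum(counts.values())
    return {name: n / total for name, n in counts.items()}


total = sum(SOURCES.values())
shares = mixture_shares(SOURCES)
for name, share in shares.items():
    print(f"{name}: {share:.1%}")
```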

Dolphin R1 is aimed at natural language processing researchers and developers, particularly those training reasoning models or building conversational systems. It can be used to improve model performance, refine how models interact with users, and explore new applications. Both academic institutions and companies can use it for research and product development.

Example Scenarios:

Use Dolphin R1 to train a reasoning model that answers complex questions more accurately.

Build an intelligent customer service system on a model fine-tuned with Dolphin R1 to improve user experience and problem-solving efficiency.

Conduct academic research based on Dolphin R1 to explore new methods and theories in natural language reasoning.

Key Features:

Provides high-quality reasoning samples for training and improving a model's reasoning abilities.

Draws on three sources (DeepSeek-R1, Gemini 2.0 Flash Thinking, and Dolphin chat), covering a range of reasoning styles and conversation scenarios.

At 800,000 samples in total, it is large enough for large-scale training across different research and development needs.

Data has been rigorously screened and cleaned for quality and consistency.

Comes with detailed documentation and guides to help users get started quickly.

Tutorial:

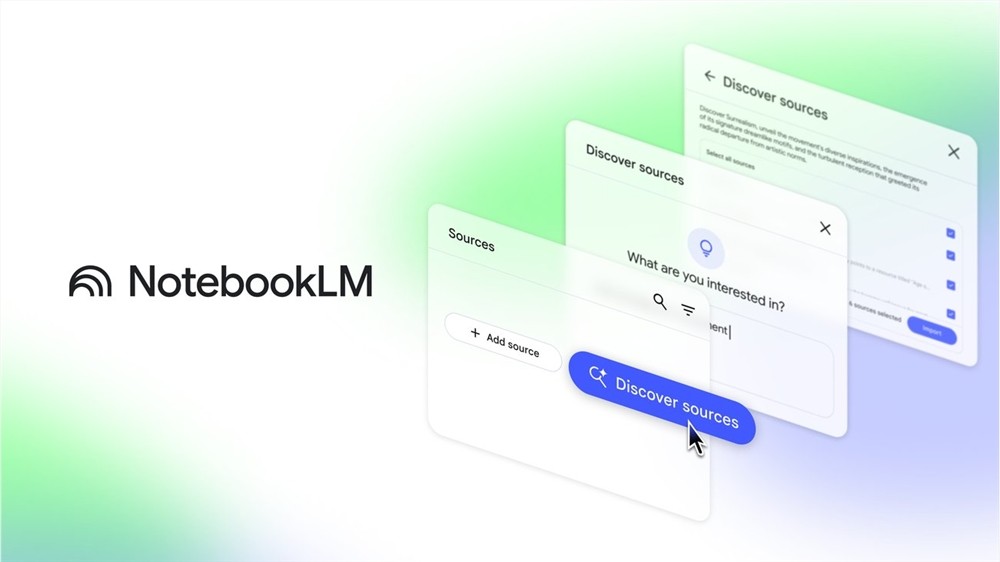

1. Download the Dolphin R1 dataset from the Hugging Face website.

2. Unpack the dataset files if needed, and inspect their structure and format.

3. Load the dataset with Python (or another programming language), then preprocess and clean it.

4. Split the dataset into training, validation, and test sets for model training and evaluation.

5. Choose a suitable model, such as a Transformer-based language model (typically a pretrained one that you fine-tune), and start training.

6. Evaluate the model on the validation set at regular intervals during training, and adjust hyperparameters based on the results.

7. Evaluate the final model on the test set to check how well it generalizes.

8. Apply the trained model to real-world scenarios such as intelligent customer service or chatbots.
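Steps 2 to 4 can be sketched in plain Python. This is a minimal sketch that uses only the standard library. It assumes the records are stored as JSON Lines, with each line holding a chat-style `messages` list of `role`/`content` entries. That schema is an assumption, and so are the inline sample records and the helper names `load_jsonl`, `clean`, and `split`. Check the dataset card on Hugging Face for the actual file names and fields.

```python
import json
import random

# Hypothetical sample records in JSON Lines format. The real Dolphin R1
# schema may differ; check the dataset card before relying on these fields.
SAMPLE_JSONL = """\
{"messages": [{"role": "user", "content": "What is 2 + 2?"}, {"role": "assistant", "content": "4"}]}
{"messages": [{"role": "user", "content": "  Name a prime number.  "}, {"role": "assistant", "content": "7"}]}
{"messages": []}
not valid json
"""


def load_jsonl(text):
    """Parse JSON Lines text, skipping blank or malformed lines."""
    records = []
    for line in text.splitlines():
        line = line.strip()
        if not line:
            continue
        try:
            records.append(json.loads(line))
        except json.JSONDecodeError:
            continue  # drop lines that are not valid JSON
    return records


def clean(records):
    """Keep dict records with a non-empty 'messages' list; strip whitespace."""
    cleaned = []
    for rec in records:
        if not isinstance(rec, dict):
            continue
        messages = rec.get("messages") or []
        if not messages:
            continue
        cleaned.append({"messages": [
            {"role": m["role"], "content": m["content"].strip()} for m in messages
        ]})
    return cleaned


def split(records, val_frac=0.1, test_frac=0.1, seed=0):
    """Shuffle a copy with a fixed seed, then cut it into train/val/test."""
    shuffled = list(records)
    random.Random(seed).shuffle(shuffled)
    n = len(shuffled)
    n_test = int(n * test_frac)
    n_val = int(n * val_frac)
    test = shuffled[:n_test]
    val = shuffled[n_test:n_test + n_val]
    train = shuffled[n_test + n_val:]
    return train, val, test


if __name__ == "__main__":
    records = clean(load_jsonl(SAMPLE_JSONL))
    train, val, test = split(records, val_frac=0.5, test_frac=0.0)
    print(len(records), len(train), len(val), len(test))  # prints: 2 1 1 0
```

Shuffling with a fixed seed before splitting keeps the split reproducible across runs. For the full dataset you would read the downloaded files line by line instead of holding them in one string.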