Fireworks collaborates with the world's leading generative AI researchers to deliver the best models at the fastest speeds. It offers carefully curated and optimized models, enterprise-grade throughput, and professional technical support, and positions itself as the fastest and most reliable AI platform.

Target audience:

"Developers building AI applications who need model inference and enterprise-grade AI capabilities"

Example usage scenarios:

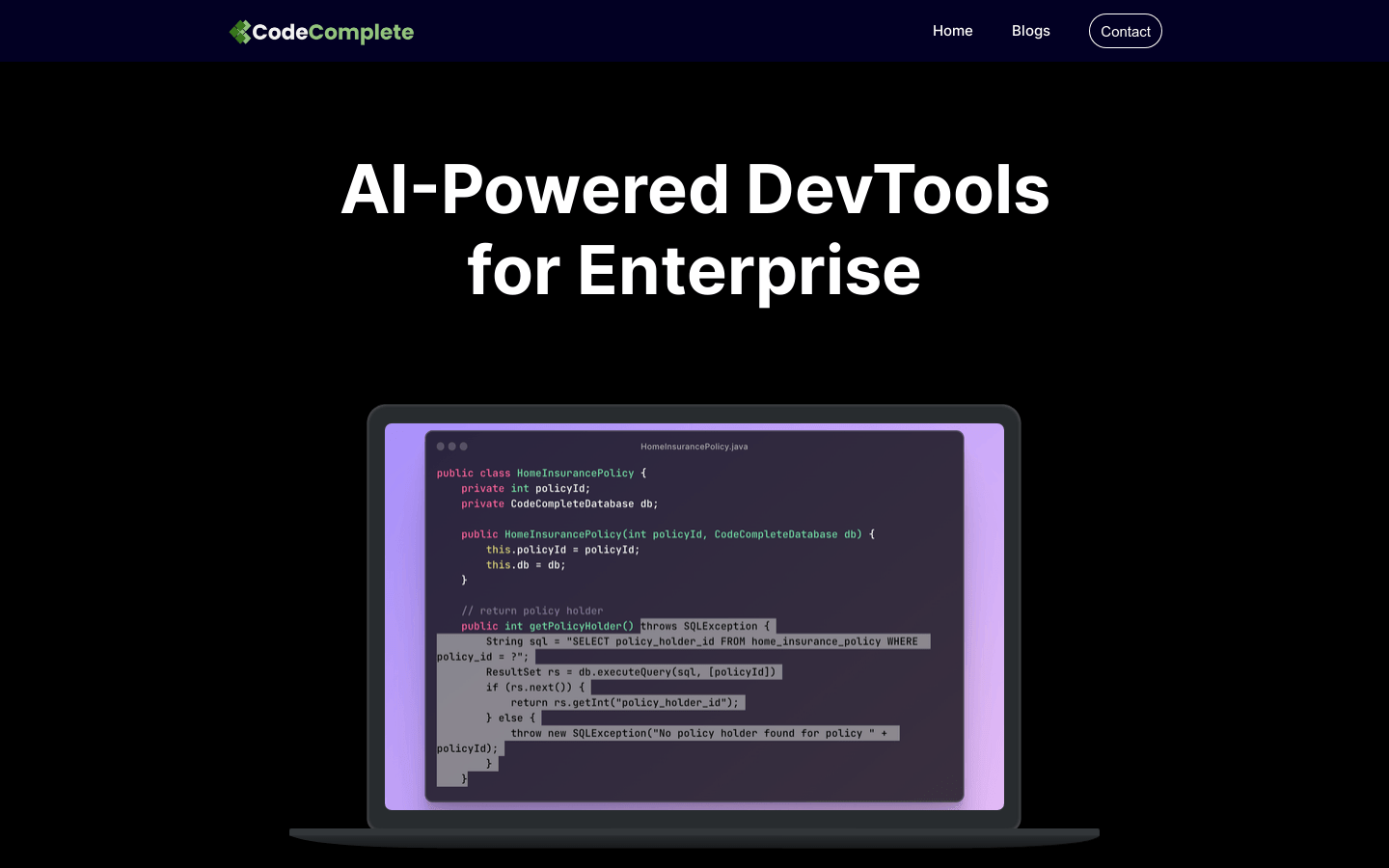

Chat LLM Model Inference

FireFunction V1 Model Inference

Llama 2 70B Chat Model Inference
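As a rough illustration of the chat scenarios listed above, the following sketch builds a chat-completion request for a hosted Llama 2 70B Chat model. It is not taken from this listing: the endpoint URL, the model identifier, and the OpenAI-style request and response shapes are all assumptions, and the helper names `build_chat_request` and `send_chat_request` are hypothetical. Check the current Fireworks documentation before relying on any of them.

```python
import json
import urllib.request

# Assumed values for illustration only; verify against the official docs.
API_URL = "https://api.fireworks.ai/inference/v1/chat/completions"
MODEL_ID = "accounts/fireworks/models/llama-v2-70b-chat"


def build_chat_request(prompt, api_key, model=MODEL_ID, max_tokens=256):
    """Build (but do not send) an HTTP POST request for a chat completion."""
    payload = {
        "model": model,
        "messages": [{"role": "user", "content": prompt}],
        "max_tokens": max_tokens,
    }
    return urllib.request.Request(
        API_URL,
        data=json.dumps(payload).encode("utf-8"),
        headers={
            "Authorization": f"Bearer {api_key}",
            "Content-Type": "application/json",
        },
        method="POST",
    )


def send_chat_request(request):
    """Send the request and return the first reply's text.

    Assumes an OpenAI-style response body with a `choices` list.
    """
    with urllib.request.urlopen(request) as response:
        body = json.load(response)
    return body["choices"][0]["message"]["content"]
```

Building the request separately from sending it lets the payload be inspected or logged before any network call is made; `send_chat_request(build_chat_request("Hello", api_key))` would perform the actual call with a valid key.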

Product Features:

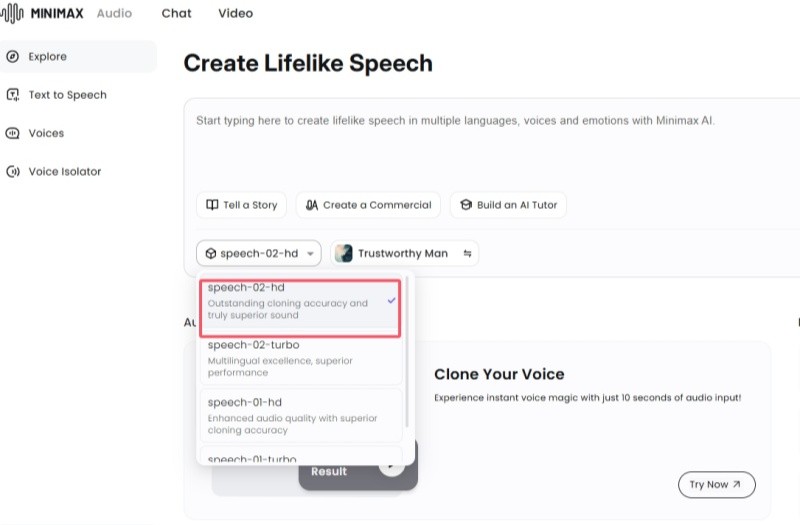

Provides Chat LLM, Mixtral MoE 8x7B Instruct, FireFunction V1, Llama 2 70B Chat, and other models

Positions itself as the fastest inference provider

Works with multimodal and function-calling models, whether provided by Fireworks or trained in-house

Strong code debugging and AI assistant capabilities