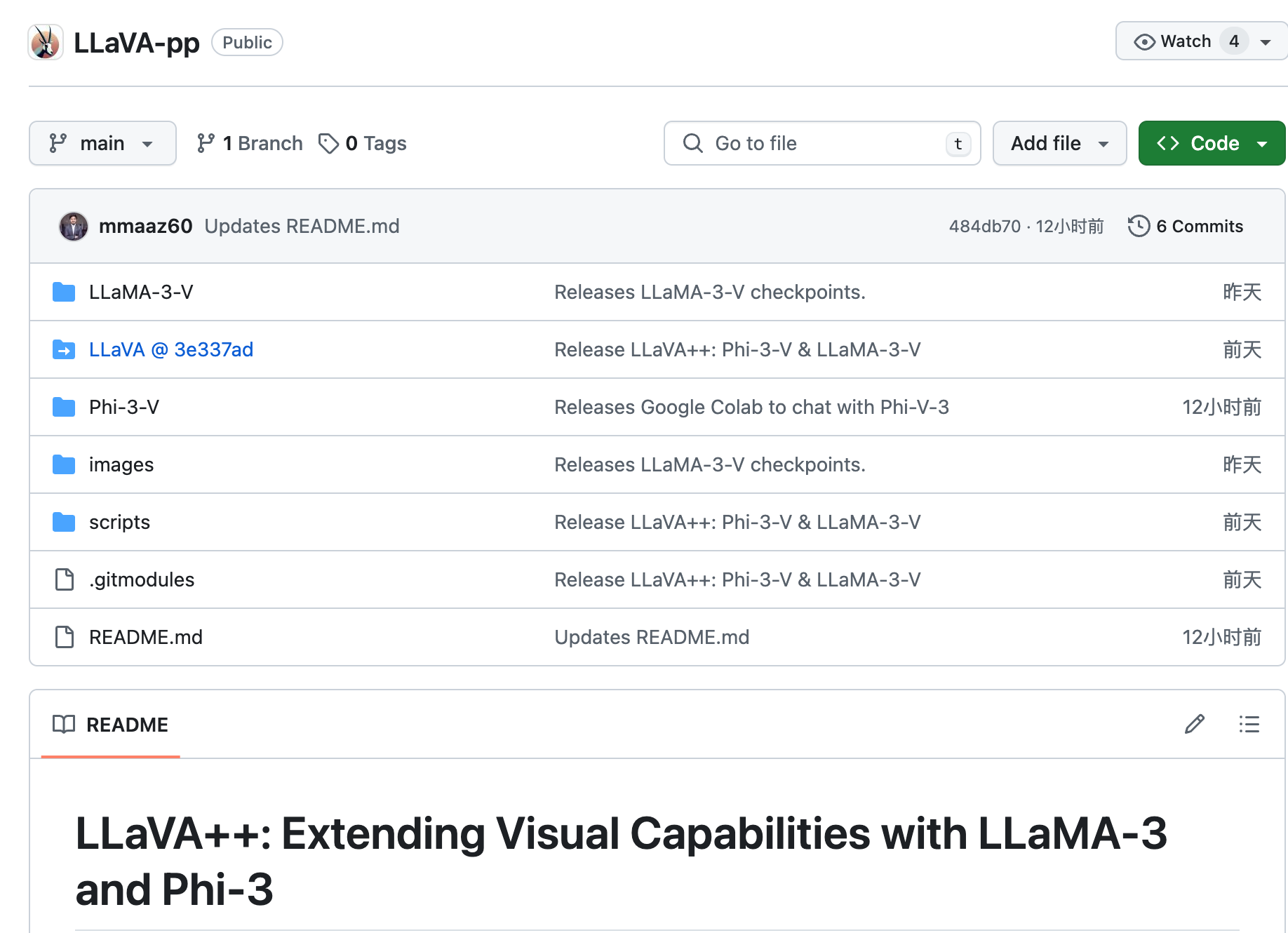

LLaVA++ is an open-source project that extends the visual capabilities of the LLaVA model by integrating the Phi-3 and LLaMA-3 language models. Developed by researchers at Mohamed bin Zayed University of AI (MBZUAI), the project aims to improve performance on both instruction-following and academic task-oriented datasets by pairing LLaVA with these newer large language models.

Target audience:

Researchers and developers can use LLaVA++ for research and development of language models.

Suitable for commercial applications that require language understanding and generation.

The model can be used for language teaching and research in education.

It is a useful basis for exploring applications of artificial intelligence that combine vision and language.

Example usage scenarios:

In education, LLaVA++ can assist language learning by providing language comprehension and generation.

In commercial applications, LLaVA++ can be integrated into customer service systems to make them more capable.

Research institutions can use LLaVA++ to conduct academic research on language models and publish related papers.

Product Features:

Integrates the Phi-3 Mini Instruct and LLaMA-3 Instruct models to improve language comprehension.

Reports performance comparisons on multiple benchmarks and datasets to demonstrate the model's advantages.

Provides pre-trained models and LoRA fine-tuned weights to suit different usage scenarios.

Provides an interactive chat demo on Google Colab.

Supports pre-training and fine-tuning of models to optimize performance for specific tasks.

Provides detailed installation and training instructions for researchers and developers.
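
The features above mention LoRA weights. As a rough illustration of the idea, and not the project's own code, the sketch below folds a low-rank LoRA update into a base weight matrix using the standard formula W' = W + (alpha / r) * B A. The helper names `matmul` and `merge_lora` are hypothetical, and plain Python lists stand in for the tensors a real training framework would use.

```python
def matmul(X, Y):
    """Multiply two matrices given as lists of rows."""
    return [[sum(x * y for x, y in zip(row, col)) for col in zip(*Y)] for row in X]


def merge_lora(W, A, B, alpha):
    """Fold a LoRA adapter into a base weight: W + (alpha / r) * (B @ A).

    W is d_out x d_in, B is d_out x r, A is r x d_in (r is the LoRA rank).
    """
    r = len(A)
    if any(len(row) != r for row in B):
        raise ValueError("B must have r columns, where r is the number of rows of A")
    scale = alpha / r
    delta = matmul(B, A)  # low-rank update, same shape as W
    return [
        [w + scale * d for w, d in zip(w_row, d_row)]
        for w_row, d_row in zip(W, delta)
    ]


# Toy example with rank r = 1 (values chosen only for illustration).
W = [[1.0, 0.0, 2.0], [0.0, 1.0, 0.0]]  # base weight, 2 x 3
A = [[1.0, 1.0, 1.0]]                    # 1 x 3
B = [[0.5], [-0.5]]                      # 2 x 1
merged = merge_lora(W, A, B, alpha=2.0)
print(merged)  # [[2.0, 1.0, 3.0], [-1.0, 0.0, -1.0]]
```

Because only the small matrices A and B are trained, LoRA weights are much smaller than a full checkpoint, which is one reason a project might ship them alongside fully fine-tuned models.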

How to use:

Step 1: Visit the GitHub project page and clone or download the LLaVA++ code base.

Step 2: Follow the project's installation guide and install or update the required dependencies by running the provided scripts.

Step 3: Choose a pre-trained model, or fine-tune one as needed for your application scenario.

Step 4: Try the model's interactive chat using the provided Google Colab link.

Step 5: Train and test the model, and evaluate its performance, following the project documentation and guidelines.

Step 6: Integrate the trained model into your own application to implement the required language processing functions.
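
Step 1 can be scripted. The sketch below is a minimal example under stated assumptions: the repository URL is an assumption that should be checked against the project's GitHub page, the destination folder name is arbitrary, and the helpers `clone_command` and `run` are hypothetical. It only prints the command unless `dry_run=False` is passed. The dependency installation in Step 2 is left to the project's own guide.

```python
import shlex
import subprocess

# Assumed repository URL; confirm it on the project's GitHub page before use.
REPO_URL = "https://github.com/mbzuai-oryx/LLaVA-pp.git"


def clone_command(repo_url, dest):
    """Build the git command that clones a repository into dest.

    --recurse-submodules is a standard git flag that also fetches any
    submodules; it has no effect if the repository has none.
    """
    return ["git", "clone", "--recurse-submodules", repo_url, dest]


def run(cmd, dry_run=True):
    """Print the command line; execute it only when dry_run is False."""
    line = shlex.join(cmd)
    print(line)
    if not dry_run:
        subprocess.run(cmd, check=True)  # raises CalledProcessError on failure
    return line


# Dry run: shows what would be executed without touching the network.
line = run(clone_command(REPO_URL, "LLaVA-pp"))
```

Keeping the command as an argument list rather than a single shell string avoids quoting problems if the destination path contains spaces.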