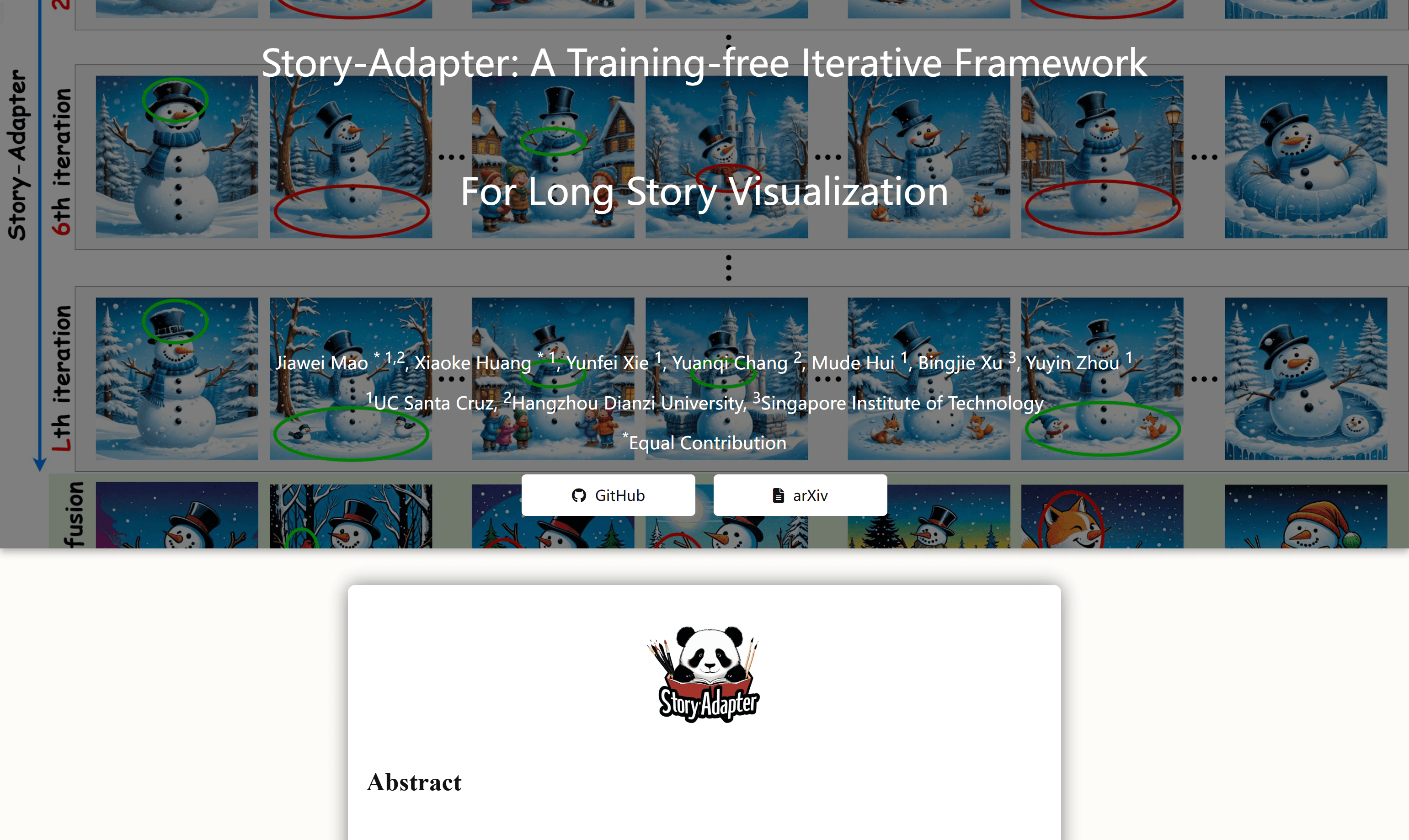

Story-Adapter is a training-free, iterative framework for long story visualization. It refines image generation over multiple iterations and uses a global reference cross-attention module to keep the story semantically coherent while reducing computational cost. Its significance lies in generating high-quality, detailed images across long stories, addressing two challenges that conventional text-to-image models face in this setting: semantic consistency and computational feasibility.

Target audience:

The target audience includes visual artists, game developers, animators, and other professionals who need long story visualization. Story-Adapter suits them because it offers an efficient, training-free way to generate the coherent, high-quality image sequences that long-form visual stories depend on.

Example usage scenarios:

- Artists use Story-Adapter to generate image sequences of long comic stories.

- Game developers use this framework to create coherent background images for game plots.

- Educators use Story-Adapter to create interactive image content for children's storybooks.

Product Features:

- No training required: Story-Adapter can be used without any training, lowering the barrier to entry.

- Iterative paradigm: Refines image generation over successive iterations to improve the quality of the story visualization.

- Global reference cross-attention module: Aggregates the information from all images generated in the previous iteration to maintain the semantic coherence of the story.

- High-quality image generation: Generates high-quality, detailed images across long stories.

- Semantic consistency: Keeps the images in a story semantically coherent through cross-attention mechanisms.

- Computational efficiency: Uses global embeddings to reduce computational cost and improve the ability to process long stories.

- Dynamic update: Each iteration uses the previous result as a reference to dynamically update the story visualization.
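The interaction between the iterative paradigm and the global reference cross-attention can be illustrated with a small toy model. This is only a sketch under stated assumptions, not Story-Adapter's actual code: images are stood in for by plain embedding vectors, `generate_frame` is a hypothetical placeholder for the diffusion model, and `ref_weight` is an invented blending parameter. It shows the general pattern described above, where every frame in one pass attends to all frames from the previous pass.

```python
import math


def softmax(scores):
    """Numerically stable softmax over a list of floats."""
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def cross_attention(query, references):
    """Single-head scaled dot-product attention of one query over reference vectors."""
    scale = math.sqrt(len(query))
    scores = [sum(q * r for q, r in zip(query, ref)) / scale for ref in references]
    weights = softmax(scores)
    return [
        sum(w * ref[i] for w, ref in zip(weights, references))
        for i in range(len(query))
    ]


def generate_frame(text_emb, references, ref_weight):
    """Hypothetical stand-in for the image generator (assumption, not the real model).

    Blends the text embedding with the result of attending over all references.
    """
    if not references:
        return list(text_emb)  # first pass: text-only generation
    attended = cross_attention(text_emb, references)
    return [(1 - ref_weight) * t + ref_weight * a for t, a in zip(text_emb, attended)]


def visualize_story(text_embs, num_iterations=3, ref_weight=0.5):
    """Run several passes; each pass uses ALL frames of the previous pass as references."""
    references = []
    frames = []
    for _ in range(num_iterations):
        frames = [generate_frame(t, references, ref_weight) for t in text_embs]
        references = frames  # dynamic update: the new results become the global reference
    return frames
```

In this toy setup, calling `visualize_story([[1.0, 0.0], [0.0, 1.0]])` pulls the two frame vectors closer together with each pass, which loosely mirrors how shared references encourage consistency across a story.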

How to use:

1. Visit Story-Adapter's GitHub page to get the project code.

2. Read the documentation to learn how to configure the environment and dependencies.

3. Prepare the story text and its corresponding prompts as described in the documentation.

4. Run the Story-Adapter framework, provide the story text, and start the iteration process.

5. Observe the image results after each iteration and adjust the parameters as needed.

6. After completing the iteration, select the best result as the final image sequence.

7. Use the generated image sequence for further creation or research.
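Steps 4 to 6 can be sketched as a small driver loop that keeps the output of every iteration so the results can be compared afterwards. This is an illustrative outline only: `run_iteration` and `score` are hypothetical callables you would supply yourself (for example, a wrapper around the project's generation script and your own quality measure), and none of these names come from the Story-Adapter codebase.

```python
from typing import Callable, List, Sequence

Frames = List[str]  # placeholder type, e.g. paths to the images saved by one iteration


def run_and_select(
    prompts: Sequence[str],
    run_iteration: Callable[[Sequence[str], Frames], Frames],
    score: Callable[[Frames], float],
    num_iterations: int = 3,
) -> Frames:
    """Run several iterations, keep every result, and return the best-scoring one."""
    history: List[Frames] = []
    references: Frames = []  # the first iteration has no reference images
    for _ in range(num_iterations):
        frames = run_iteration(prompts, references)
        history.append(frames)  # kept so each iteration can be inspected
        references = frames  # the next iteration uses this result as its reference
    return max(history, key=score)
```

In practice the scoring step is often manual inspection; a callable is used here only so that the selection in step 6 is explicit.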