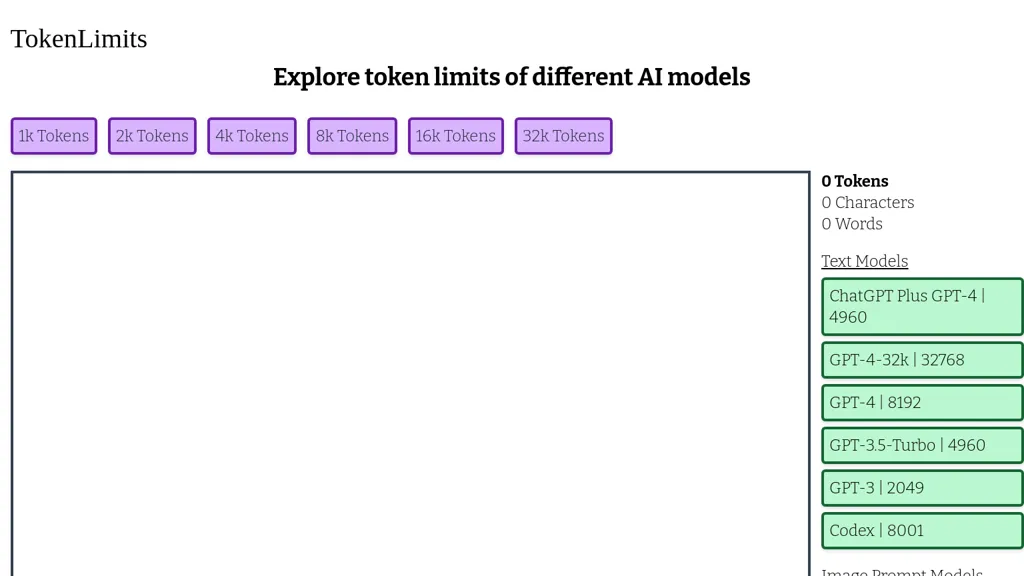

Tokenlimits

Tokenlimits offers insights into AI model token limits, from 1k to 32k tokens, for GPT-4 and GPT-3.5-turbo, helping developers size their project inputs efficiently.

What is Tokenlimits?

Tokenlimits is a tool for exploring the token limits of AI models such as GPT-4 and GPT-3.5-turbo. It gives an overview of token thresholds ranging from 1k to 32k tokens. This resource is especially helpful for developers and researchers who want to optimize their projects by understanding each model's maximum input capacity.
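
To make the idea of checking an input against a token limit concrete, here is a minimal Python sketch. It is not part of Tokenlimits and does not use its interface. The limits in `ASSUMED_LIMITS` are illustrative values within the 1k to 32k range, the four-characters-per-token ratio is only a rough rule of thumb for English text, and the function names are hypothetical. A real tokenizer would give exact counts.

```python
import math

# Illustrative token limits per model (assumed values, not taken from Tokenlimits).
ASSUMED_LIMITS = {
    "gpt-3.5-turbo": 4_096,
    "gpt-4": 8_192,
    "gpt-4-32k": 32_768,
}


def estimate_tokens(text: str, chars_per_token: float = 4.0) -> int:
    """Roughly estimate the token count from the character count (heuristic only)."""
    return math.ceil(len(text) / chars_per_token)


def fits(text: str, model: str, reserved_for_output: int = 0) -> bool:
    """Return True if the estimated input plus reserved output tokens fits the limit."""
    return estimate_tokens(text) + reserved_for_output <= ASSUMED_LIMITS[model]


if __name__ == "__main__":
    prompt = "Summarize the following report. " * 200
    for model in ASSUMED_LIMITS:
        print(model, fits(prompt, model, reserved_for_output=500))
```

Reserving part of the budget for the reply (`reserved_for_output`) is a design choice in this sketch: it assumes the limit covers the prompt and the response together, so an input that only just fits would leave no room for an answer.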