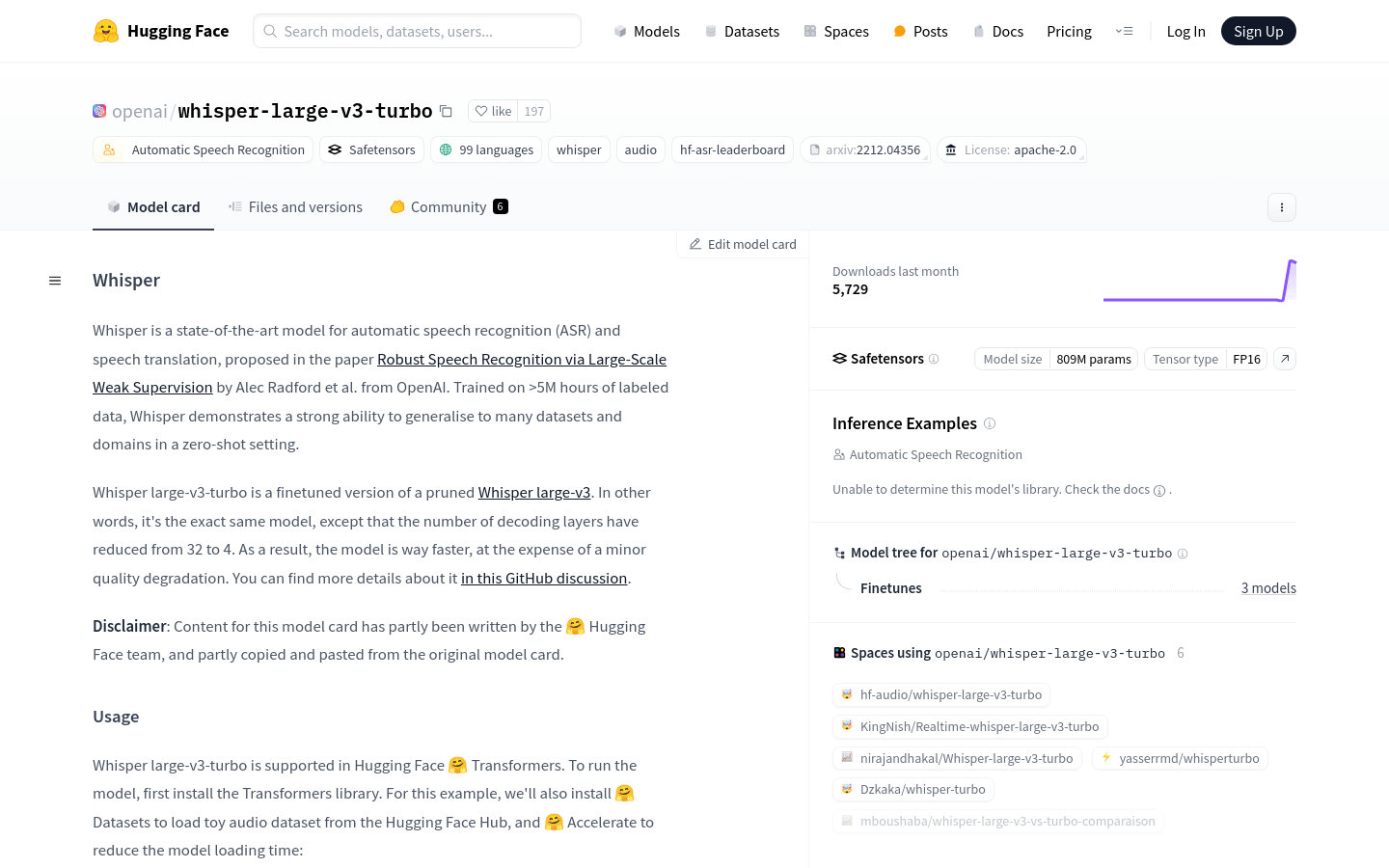

What is Whisper large-v3-turbo?

Whisper large-v3-turbo is an automatic speech recognition (ASR) and speech translation model developed by OpenAI. Whisper was trained on over 5 million hours of labeled data, which lets it generalize to many datasets and domains without additional training. This model is a fine-tuned version of a pruned Whisper large-v3: the number of decoder layers is reduced from 32 to 4, which makes it considerably faster at the cost of a minor drop in quality.

Who can benefit from using Whisper large-v3-turbo?

The target audience includes AI researchers, developers, and businesses looking for efficient speech recognition solutions. Its multilingual support and fast inference make it particularly suitable for users who need to process large volumes of diverse audio content.

In what scenarios can Whisper large-v3-turbo be used?

Whisper large-v3-turbo can be used in real-time speech-to-text conversion to improve meeting notes. It can also be integrated into mobile applications to provide multilingual voice translation services. Additionally, it is useful for transcribing and analyzing long-form audio content like interviews or lectures.

What are the key features of Whisper large-v3-turbo?

Supports 99 languages for speech recognition and translation.

Can generalize to multiple datasets and domains without further training.

Runs faster than Whisper large-v3 because it has fewer decoder layers.

Handles long audio files by processing them in segments.

Compatible with all Whisper decoding strategies, including temperature fallback and conditioning on previous tokens.

Automatically predicts the source audio language.

Supports tasks such as speech transcription and translation.

Provides time-stamped outputs at sentence or word level.
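The segment-based handling of long audio mentioned above can be illustrated with a small sketch. Whisper models operate on windows of up to 30 seconds, so longer recordings are split into pieces that are transcribed separately and then joined. The function name `segment_bounds` and the 5-second overlap are assumptions made for this illustration; this is not the library's actual implementation, only a way to show how overlapping windows can cover a long file.

```python
from typing import List, Tuple


def segment_bounds(
    total_s: float, window_s: float = 30.0, overlap_s: float = 5.0
) -> List[Tuple[float, float]]:
    """Return (start, end) times in seconds that cover [0, total_s].

    Consecutive windows overlap by `overlap_s` seconds so that words cut at
    a boundary appear whole in at least one window. The overlap value is an
    illustrative assumption, not a documented default.
    """
    if window_s <= 0 or not 0 <= overlap_s < window_s:
        raise ValueError("need window_s > 0 and 0 <= overlap_s < window_s")

    step = window_s - overlap_s  # how far each new window advances
    bounds: List[Tuple[float, float]] = []
    start = 0.0
    while start < total_s:
        end = min(start + window_s, total_s)  # last window may be shorter
        bounds.append((start, end))
        if end >= total_s:
            break
        start += step
    return bounds
```

For a 70-second recording, `segment_bounds(70.0)` yields three windows: 0 to 30, 25 to 55, and 50 to 70 seconds.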

How do you use Whisper large-v3-turbo?

1. Install the Transformers library, along with the Datasets and Accelerate libraries.

2. Load the model and processor using AutoModelForSpeechSeq2Seq and AutoProcessor from Hugging Face Hub.

3. Create a pipeline for automatic speech recognition.

4. Prepare your audio data, which could be sourced from the Hugging Face Hub or a local file.

5. Call the pipeline with your audio data to get the transcription results.

6. To enable additional decoding strategies, pass the corresponding options to the pipeline call through the generate_kwargs argument.

7. For translation tasks, set task to 'translate' inside generate_kwargs.

8. To get time-stamped outputs, set return_timestamps to True for sentence-level timestamps or to 'word' for word-level timestamps.
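The steps above can be sketched in Python roughly as follows. This is a minimal sketch, not a definitive setup: the helper names `build_call_kwargs`, `load_asr_pipeline`, and `transcribe` are hypothetical, the model ID `openai/whisper-large-v3-turbo` is assumed to be the Hugging Face Hub identifier, and the fallback thresholds are illustrative values. Step 1 is a shell command, shown here as a comment.

```python
# Step 1 (run in a shell, not in Python):
#   pip install --upgrade transformers datasets[audio] accelerate

MODEL_ID = "openai/whisper-large-v3-turbo"  # assumed Hub identifier


def build_call_kwargs(translate=False, timestamps=None, language=None, fallback=False):
    """Assemble keyword arguments for the pipeline call (steps 6 to 8)."""
    generate_kwargs = {}
    if translate:
        generate_kwargs["task"] = "translate"  # step 7
    if language is not None:
        generate_kwargs["language"] = language  # skip automatic detection
    if fallback:
        # Step 6: temperature fallback; the thresholds are illustrative.
        generate_kwargs["temperature"] = (0.0, 0.2, 0.4, 0.6, 0.8, 1.0)
        generate_kwargs["compression_ratio_threshold"] = 1.35
        generate_kwargs["logprob_threshold"] = -1.0
        generate_kwargs["condition_on_prev_tokens"] = False

    call_kwargs = {}
    if generate_kwargs:
        call_kwargs["generate_kwargs"] = generate_kwargs
    if timestamps:
        # Step 8: True for sentence-level, "word" for word-level timestamps.
        call_kwargs["return_timestamps"] = timestamps
    return call_kwargs


def load_asr_pipeline(model_id=MODEL_ID):
    """Steps 2 and 3: load the model and processor, then build the pipeline."""
    # Imported lazily so the helper above works without these packages.
    import torch
    from transformers import AutoModelForSpeechSeq2Seq, AutoProcessor, pipeline

    use_gpu = torch.cuda.is_available()
    device = "cuda:0" if use_gpu else "cpu"
    dtype = torch.float16 if use_gpu else torch.float32

    # Recent Transformers releases may prefer `dtype=` over `torch_dtype=`.
    model = AutoModelForSpeechSeq2Seq.from_pretrained(
        model_id, torch_dtype=dtype, low_cpu_mem_usage=True, use_safetensors=True
    )
    model.to(device)
    processor = AutoProcessor.from_pretrained(model_id)

    return pipeline(
        "automatic-speech-recognition",
        model=model,
        tokenizer=processor.tokenizer,
        feature_extractor=processor.feature_extractor,
        device=device,
    )


def transcribe(audio_path, **options):
    """Steps 4 and 5: run the pipeline on a local audio file."""
    asr = load_asr_pipeline()
    return asr(audio_path, **build_call_kwargs(**options))
```

Calling `transcribe("audio.mp3", timestamps=True)` (with a placeholder file name) would download the model on first use and return a dictionary whose `"text"` entry holds the transcription. Loading the pipeline once and reusing it is preferable when transcribing many files.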