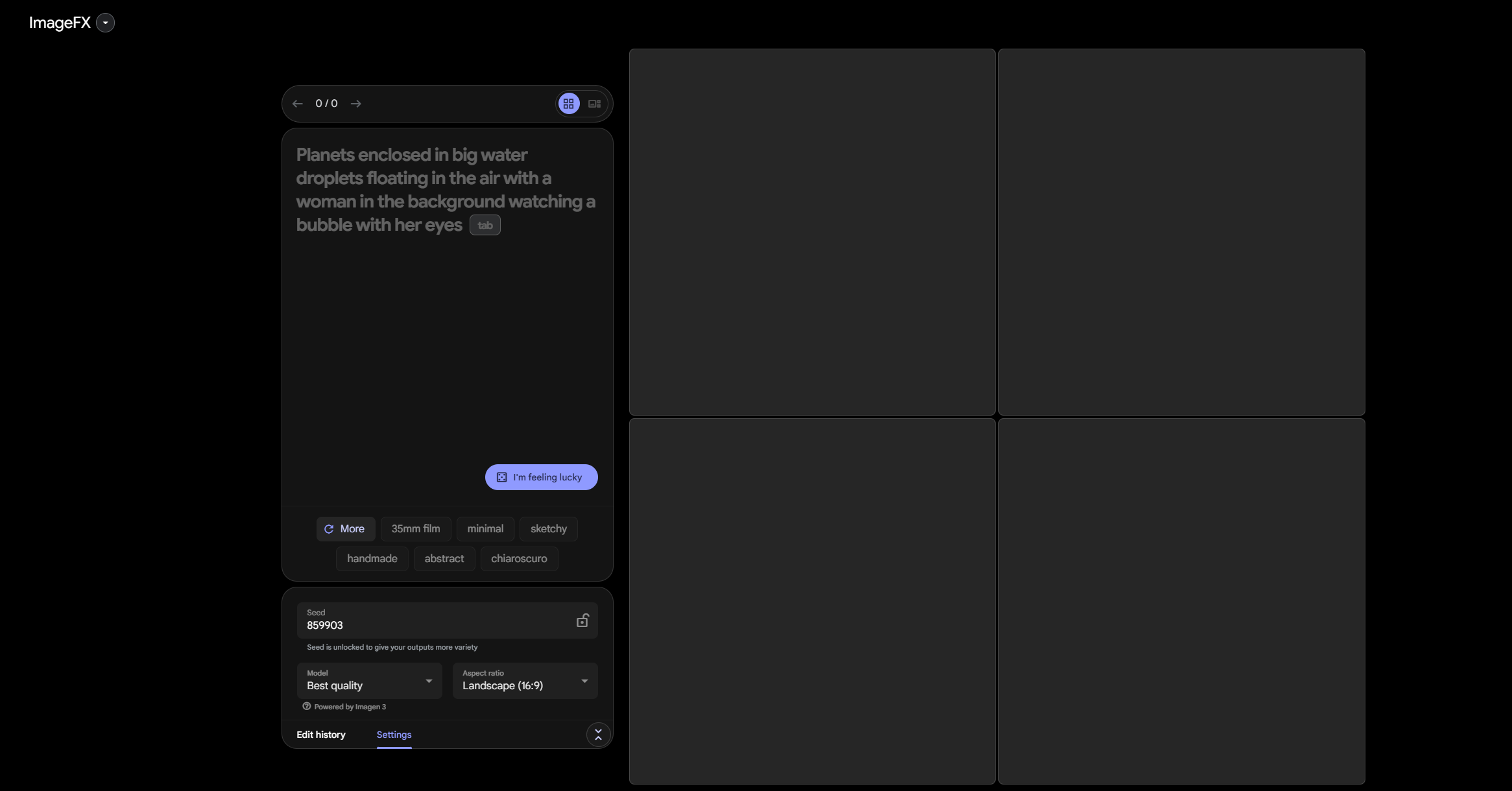

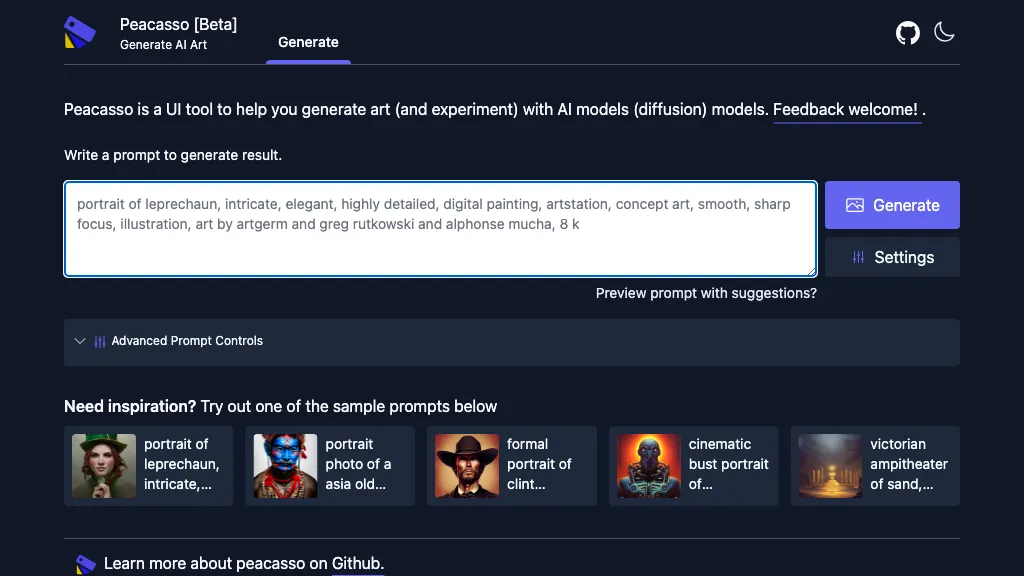

ComfyUI is a node-based graphical user interface (GUI) for Stable Diffusion. It lets users freely combine image-processing nodes in a modular way to build highly flexible AI image-generation workflows. Compared with other web UIs such as AUTOMATIC1111, ComfyUI is lighter, runs faster, and makes the data flow visible. However, it has a steeper learning curve and takes some effort to get started with.

If you want to customize the Stable Diffusion generation process in depth and keep clear control over the data flow, ComfyUI is well worth learning.

ComfyUI supports Windows, macOS, and Linux, and the installation method differs slightly on each:

✅ Windows users: download the ComfyUI installation package from the official website, extract it, and run ComfyUI.exe.

✅ Mac/Linux users: install ComfyUI manually from the command line. Download and extract ComfyUI, then set up its Python dependencies as described in the official guide.

Once ComfyUI is installed and running, open **http://127.0.0.1:8188/** in your browser to reach the ComfyUI main interface.
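If the page does not load, a quick script can confirm whether the server is answering at all. Below is a minimal standard-library sketch, assuming ComfyUI is serving its web interface at the default address above. The function name is illustrative, and any HTTP 200 reply from the root URL is treated as "running".

```python
import urllib.request

# Default address from the text; change it if ComfyUI was started with
# a different host or port.
COMFYUI_URL = "http://127.0.0.1:8188"


def is_comfyui_running(base_url: str = COMFYUI_URL, timeout: float = 2.0) -> bool:
    """Return True if something answers HTTP 200 at the root of base_url."""
    try:
        with urllib.request.urlopen(base_url.rstrip("/") + "/", timeout=timeout) as resp:
            return resp.status == 200
    except OSError:
        # URLError, HTTPError, refused connections and timeouts are all OSError.
        return False


if __name__ == "__main__":
    print("ComfyUI reachable:", is_comfyui_running())
```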

Official ComfyUI download page: https://www.comfy.org/zh-cn/download

In ComfyUI, image generation works by connecting nodes. The basic text-to-image steps are:

1️⃣ Select a model: in the Load Checkpoint node, load the Stable Diffusion model you downloaded.

2️⃣ Enter the prompts: in the CLIP Text Encode (Prompt) nodes, enter the positive prompt and the negative prompt (the default workflow has one node for each).

3️⃣ Run the generation: click the Queue Prompt button to start generating the image. Each node is highlighted with a green border while it executes, so you can follow the progress.
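The three steps above correspond to a small graph of built-in nodes. Below is a sketch of that graph written in ComfyUI's API (JSON) workflow format as a Python dict. It assumes the stock node class names (CheckpointLoaderSimple is the class behind Load Checkpoint; the others are CLIPTextEncode, EmptyLatentImage, KSampler, VAEDecode and SaveImage). The checkpoint filename, sampler settings and image size are placeholder values, so compare the result with a workflow exported from your own ComfyUI in API format.

```python
import json


def build_txt2img_workflow(ckpt_name: str, positive: str, negative: str,
                           seed: int = 0, steps: int = 20, cfg: float = 7.0,
                           width: int = 512, height: int = 512) -> dict:
    """Return a text-to-image graph in ComfyUI's API workflow format.

    Keys are node ids; a link is written as [source_node_id, output_index].
    """
    return {
        # Step 1: Load Checkpoint -> outputs MODEL (0), CLIP (1), VAE (2)
        "1": {"class_type": "CheckpointLoaderSimple",
              "inputs": {"ckpt_name": ckpt_name}},
        # Step 2: one CLIP Text Encode node per prompt
        "2": {"class_type": "CLIPTextEncode",
              "inputs": {"text": positive, "clip": ["1", 1]}},
        "3": {"class_type": "CLIPTextEncode",
              "inputs": {"text": negative, "clip": ["1", 1]}},
        # Empty latent canvas that the sampler denoises
        "4": {"class_type": "EmptyLatentImage",
              "inputs": {"width": width, "height": height, "batch_size": 1}},
        "5": {"class_type": "KSampler",
              "inputs": {"model": ["1", 0], "positive": ["2", 0],
                         "negative": ["3", 0], "latent_image": ["4", 0],
                         "seed": seed, "steps": steps, "cfg": cfg,
                         "sampler_name": "euler", "scheduler": "normal",
                         "denoise": 1.0}},
        # Decode latents to pixels with the checkpoint's VAE, then save
        "6": {"class_type": "VAEDecode",
              "inputs": {"samples": ["5", 0], "vae": ["1", 2]}},
        "7": {"class_type": "SaveImage",
              "inputs": {"images": ["6", 0], "filename_prefix": "ComfyUI"}},
    }


if __name__ == "__main__":
    # "model.safetensors" is a hypothetical file in models/checkpoints/
    wf = build_txt2img_workflow("model.safetensors", "a red fox", "blurry")
    print(json.dumps(wf, indent=2))
```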

Tip: Because the whole workflow is visual, you can adjust parameters and node settings directly on the canvas for finer control over the resulting image.
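Clicking Queue Prompt submits the current graph to the local ComfyUI server, and the same can be done from a script. Below is a minimal sketch, assuming the server's POST /prompt endpoint, which takes a JSON body of the form {"prompt": <API-format workflow>, "client_id": <string>}. The function name is hypothetical, and the function only builds the request so you can inspect it before sending.

```python
import json
import urllib.request
import uuid
from typing import Optional


def make_queue_request(workflow: dict, server: str = "http://127.0.0.1:8188",
                       client_id: Optional[str] = None) -> urllib.request.Request:
    """Build (but do not send) a POST /prompt request that queues `workflow`."""
    body = {
        "prompt": workflow,  # the graph, in API (JSON) workflow format
        "client_id": client_id or str(uuid.uuid4()),  # any unique string
    }
    return urllib.request.Request(
        server.rstrip("/") + "/prompt",
        data=json.dumps(body).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


if __name__ == "__main__":
    req = make_queue_request({})
    print(req.get_method(), req.full_url)
```

While ComfyUI is running, passing the request to `urllib.request.urlopen()` queues the job; the JSON reply is expected to include a `prompt_id` identifying it.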

ComfyUI does not ship with any generation models, so you need to download a model file yourself and place it in ComfyUI's checkpoint directory (models/checkpoints/).

Model source: search Hugging Face for Stable Diffusion models (such as SDXL or DreamShaper).

Download method: some large models need to be downloaded with git-lfs, or fetched directly using a Hugging Face access token.
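For the access-token route, Hugging Face serves single files from URLs of the form https://huggingface.co/<repo_id>/resolve/<revision>/<filename> and accepts a token in an `Authorization: Bearer` header. Below is a minimal standard-library sketch. The repository and file names are hypothetical, and reading the token from an HF_TOKEN environment variable is an assumption of this example (create the token in your Hugging Face account settings).

```python
import os
import shutil
import urllib.parse
import urllib.request
from typing import Optional


def hf_file_request(repo_id: str, filename: str, revision: str = "main",
                    token: Optional[str] = None) -> urllib.request.Request:
    """Build a GET request for one file in a Hugging Face model repo."""
    url = "https://huggingface.co/{}/resolve/{}/{}".format(
        repo_id, urllib.parse.quote(revision, safe=""),
        urllib.parse.quote(filename))  # keeps "/" for files in subfolders
    headers = {}
    if token:
        # Gated or private repos reject anonymous downloads.
        headers["Authorization"] = f"Bearer {token}"
    return urllib.request.Request(url, headers=headers)


def download(req: urllib.request.Request, dest: str) -> None:
    """Stream the response body to `dest` without loading it into memory."""
    with urllib.request.urlopen(req) as resp, open(dest, "wb") as out:
        shutil.copyfileobj(resp, out)


if __name__ == "__main__":
    # Hypothetical repo and file names; replace them with the model you want.
    req = hf_file_request("some-org/some-model", "model.safetensors",
                          token=os.environ.get("HF_TOKEN"))
    print(req.full_url)
```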

Model format: ComfyUI accepts .ckpt and .safetensors files. Place the downloaded file (extracting it first if it comes in an archive) in the models/checkpoints/ folder.
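The placement step can also be scripted. Below is a small sketch, assuming the standard folder layout with checkpoints in models/checkpoints/ under the ComfyUI root; the helper name and paths are hypothetical. It copies rather than moves, so the original download is kept.

```python
import shutil
from pathlib import Path

# The two checkpoint formats named in the text.
CHECKPOINT_EXTENSIONS = {".ckpt", ".safetensors"}


def install_checkpoint(model_file: str, comfyui_root: str) -> Path:
    """Copy a downloaded checkpoint into <comfyui_root>/models/checkpoints/."""
    src = Path(model_file)
    if src.suffix.lower() not in CHECKPOINT_EXTENSIONS:
        raise ValueError(f"unsupported checkpoint format: {src.name}")
    dest_dir = Path(comfyui_root) / "models" / "checkpoints"
    dest_dir.mkdir(parents=True, exist_ok=True)
    dest = dest_dir / src.name
    shutil.copy2(src, dest)  # use shutil.move instead to avoid keeping two copies
    return dest
```

After copying, refresh the browser page so the new file shows up in the Load Checkpoint node's list.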

Recommended models:

stable-diffusion-3-medium: high-quality, general-purpose image generation

DreamShaper: focuses on anime and artistic styles

SDXL: high-resolution image generation

Case 1: Generate landscape paintings

Select the stable-diffusion-3-medium model

Positive prompt: A beautiful landscape, mountains, rivers, blue sky, clouds

Negative prompt: blurry, low quality, distortion

Run the workflow to get a high-definition landscape image

Case 2: Generate anime characters

Select the DreamShaper model

Positive prompt: anime style, cute girl, long flowing hair

Negative prompt: ugly, deformed

Run the workflow to get beautiful anime character images
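Cases 1 and 2 use the same text-to-image graph and change only three inputs: the checkpoint and the two prompts. Below is a sketch that applies such a preset to a workflow in ComfyUI's API format, assuming the KSampler's positive and negative inputs are linked directly to CLIP Text Encode nodes, as in the default workflow. The checkpoint filenames are hypothetical; use the names of the files in your own models/checkpoints/.

```python
import copy

# Prompts from Cases 1 and 2; the checkpoint filenames are hypothetical.
PRESETS = {
    "landscape": {
        "ckpt_name": "sd3-medium.safetensors",
        "positive": "A beautiful landscape, mountains, rivers, blue sky, clouds",
        "negative": "blurry, low quality, distortion",
    },
    "anime": {
        "ckpt_name": "dreamshaper.safetensors",
        "positive": "anime style, cute girl, long flowing hair",
        "negative": "ugly, deformed",
    },
}


def apply_preset(workflow: dict, preset: dict) -> dict:
    """Return a copy of an API-format workflow with model and prompts swapped."""
    wf = copy.deepcopy(workflow)  # leave the caller's workflow untouched
    for node in wf.values():
        if node["class_type"] == "CheckpointLoaderSimple":
            node["inputs"]["ckpt_name"] = preset["ckpt_name"]
        elif node["class_type"] == "KSampler":
            # Follow the sampler's links back to its two text-encoder nodes.
            for role in ("positive", "negative"):
                source_id, _output_index = node["inputs"][role]
                wf[source_id]["inputs"]["text"] = preset[role]
    return wf
```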

Case 3: Image Repair (Inpainting)

Load an existing image

Mark the area to be repaired by masking it for the inpainting step

Describe the repair target in the prompt, for example, restore missing parts with natural textures

After running, ComfyUI automatically fills in the masked area
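One way to wire Case 3 with built-in nodes is sketched below in the API workflow format. Load Image outputs both the picture and a mask, taken from the image's alpha channel (which is where the mask editor stores what you paint). VAE Encode (for Inpainting) combines the two into a latent, and KSampler regenerates the masked area. The class names LoadImage and VAEEncodeForInpaint are assumed stock nodes. The filenames, node ids and sampler values are placeholders, and the image file is expected in ComfyUI's input/ folder. This is one possible graph, not the only way to inpaint.

```python
def build_inpaint_workflow(ckpt_name: str, image_file: str, positive: str,
                           negative: str, seed: int = 0) -> dict:
    """Inpainting graph in ComfyUI's API format (links are [node_id, output])."""
    return {
        "1": {"class_type": "CheckpointLoaderSimple",
              "inputs": {"ckpt_name": ckpt_name}},
        # Load Image outputs IMAGE (0) and MASK (1); the mask comes from the
        # image's alpha channel, e.g. what you paint in the mask editor.
        "2": {"class_type": "LoadImage", "inputs": {"image": image_file}},
        "3": {"class_type": "CLIPTextEncode",
              "inputs": {"text": positive, "clip": ["1", 1]}},
        "4": {"class_type": "CLIPTextEncode",
              "inputs": {"text": negative, "clip": ["1", 1]}},
        # Encode the picture; only the masked area will be regenerated.
        "5": {"class_type": "VAEEncodeForInpaint",
              "inputs": {"pixels": ["2", 0], "mask": ["2", 1],
                         "vae": ["1", 2], "grow_mask_by": 6}},
        "6": {"class_type": "KSampler",
              "inputs": {"model": ["1", 0], "positive": ["3", 0],
                         "negative": ["4", 0], "latent_image": ["5", 0],
                         "seed": seed, "steps": 20, "cfg": 7.0,
                         "sampler_name": "euler", "scheduler": "normal",
                         # full denoise, since the masked area is blanked out
                         "denoise": 1.0}},
        "7": {"class_type": "VAEDecode",
              "inputs": {"samples": ["6", 0], "vae": ["1", 2]}},
        "8": {"class_type": "SaveImage",
              "inputs": {"images": ["7", 0], "filename_prefix": "inpaint"}},
    }
```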

✅ Outpainting: generate additional content around an existing image to extend its borders.

✅ Upscale (image enlargement): increase the resolution to make the image sharper.

✅ Manager (model management): quickly switch between and manage downloaded model files.

✅ Embeddings: fine-tune the effect of prompts to improve the quality of generated images.
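As an example of the embeddings item: ComfyUI's README describes placing embedding files in models/embeddings/ and referencing them in a prompt as `embedding:<file name>`, while ordinary prompt emphasis uses `(text:weight)`. Below is a small string helper built on those assumptions. The helper and the embedding file name are hypothetical, and combining the weight syntax with an embedding reference is assumed to behave like weighting any other prompt text.

```python
from typing import Optional


def with_embedding(prompt: str, embedding_name: str,
                   weight: Optional[float] = None) -> str:
    """Append an `embedding:<name>` reference to a prompt.

    The embedding file is expected in ComfyUI's models/embeddings/ folder.
    """
    token = f"embedding:{embedding_name}"
    if weight is not None:
        # Same (text:weight) emphasis syntax used for ordinary prompt words.
        token = f"({token}:{weight})"
    return f"{prompt}, {token}" if prompt else token


if __name__ == "__main__":
    # "my_negative.pt" is a hypothetical embedding file.
    print(with_embedding("ugly, deformed", "my_negative.pt", 1.2))
```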

Summary: ComfyUI is a highly flexible, visual, and modular Stable Diffusion interface for users who like to explore the details of AI generation. If you are interested in AI image generation, it is well worth a try!