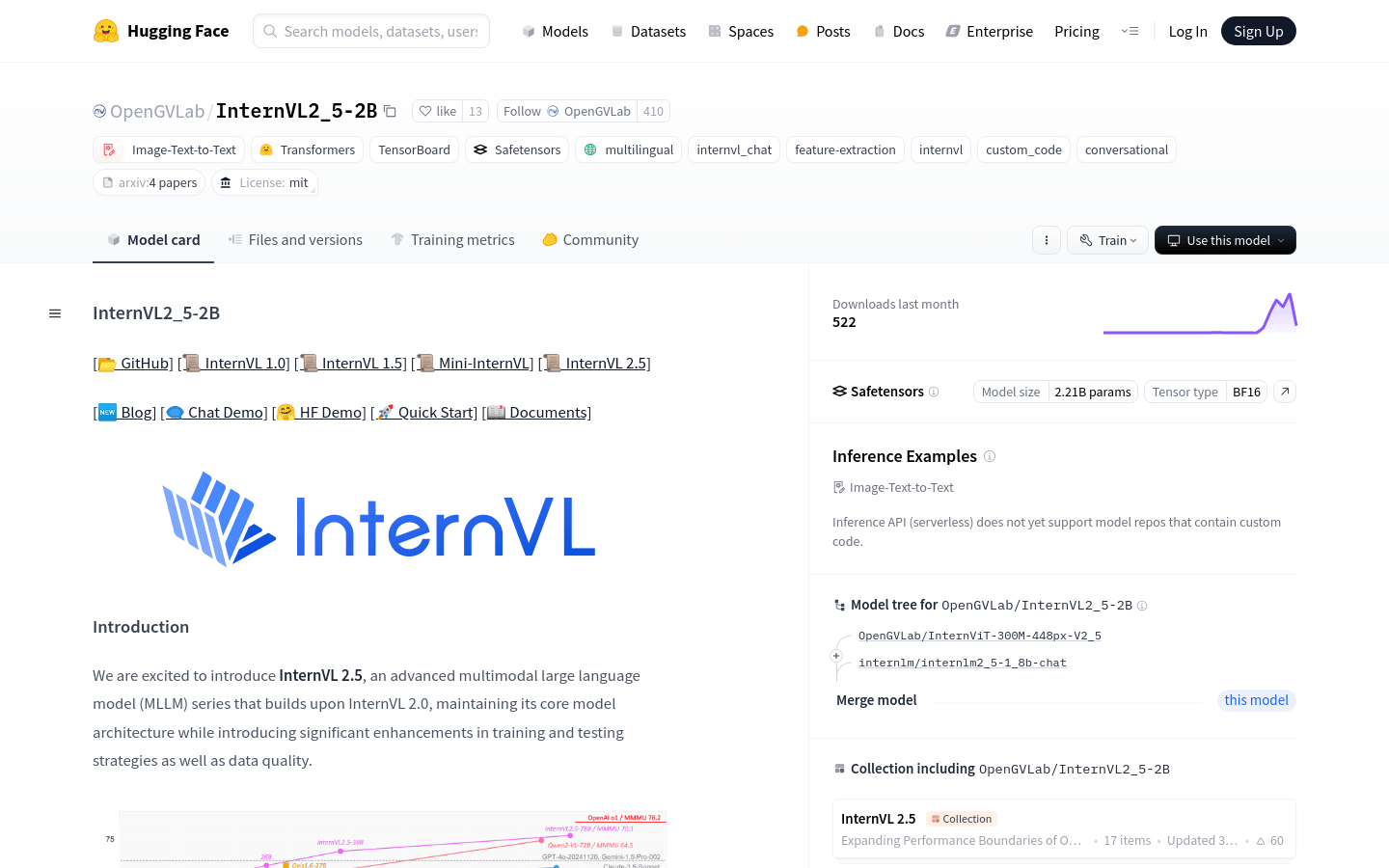

InternVL 2.5 is an advanced family of multimodal large language models. It keeps the core model architecture of InternVL 2.0 and adds significant improvements to its training and test-time strategies and to data quality. The model pairs a newly incrementally pre-trained InternViT vision encoder with various pre-trained large language models, such as InternLM 2.5 and Qwen 2.5, through a randomly initialized MLP projector. InternVL 2.5 supports multi-image and video data and uses dynamic high-resolution training, which improves performance on multimodal data.

Target audience:

"The target audience is researchers, developers and enterprises, especially those that need to process and understand multimodal data such as combined images and text. With its strong multimodal understanding and generation capabilities, InternVL2_5-2B is well suited to building image-text applications such as image captioning, visual question answering and multimodal dialogue systems."

Example usage scenarios:

Use the InternVL2_5-2B model to generate detailed descriptions of product images for e-commerce platforms.

In education, the model can provide image-assisted language-learning materials that enhance the learning experience.

In security monitoring, its video understanding capabilities can be used to automatically identify and respond to abnormal behavior.

Product Features:

Supports dynamic high-resolution training for multimodal data, improving the model's ability to process multi-image and video inputs.
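Dynamic high resolution means an image is split into a variable number of fixed-size tiles rather than being squashed to a single input size. The sketch below shows only the grid-selection step, assuming 448×448 tiles, a budget of 1 to 12 tiles and an extra thumbnail tile for the global view (the defaults used by the preprocessing code on the model card, to the best of our knowledge). Resizing and cropping are omitted, and the function names are hypothetical.

```python
def candidate_grids(min_tiles=1, max_tiles=12):
    """All (cols, rows) tile grids whose tile count lies in [min_tiles, max_tiles], smallest first."""
    grids = {
        (cols, rows)
        for cols in range(1, max_tiles + 1)
        for rows in range(1, max_tiles + 1)
        if min_tiles <= cols * rows <= max_tiles
    }
    # Sort by tile count; the grid itself breaks ties deterministically.
    return sorted(grids, key=lambda g: (g[0] * g[1], g))


def choose_grid(width, height, tile=448, min_tiles=1, max_tiles=12):
    """Pick the grid whose aspect ratio is closest to the image's aspect ratio."""
    aspect = width / height
    best, best_diff = (1, 1), float("inf")
    for cols, rows in candidate_grids(min_tiles, max_tiles):
        diff = abs(aspect - cols / rows)
        if diff < best_diff:
            best, best_diff = (cols, rows), diff
        elif diff == best_diff and width * height > 0.5 * tile * tile * cols * rows:
            # On a tie, move to the larger grid only if the image has enough pixels to fill it.
            best = (cols, rows)
    return best


def count_tiles(width, height, tile=448, max_tiles=12, use_thumbnail=True):
    """Number of tile-sized crops fed to the vision encoder, plus an optional global thumbnail."""
    cols, rows = choose_grid(width, height, tile, 1, max_tiles)
    n = cols * rows
    return n + 1 if use_thumbnail and n > 1 else n
```

For a 896×448 image this picks a 2×1 grid, giving two tiles plus one thumbnail, while a 448×448 image stays a single tile with no thumbnail.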

Uses the 'ViT-MLP-LLM' architecture, which connects the vision encoder to the language model and performs cross-modal interaction through the MLP projector.
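In a ViT-MLP-LLM design, patch features from the vision encoder are compressed and then mapped into the language model's embedding space by the MLP projector. The pure-Python sketch below illustrates that path under stated assumptions: a 448-pixel tile, 14-pixel patches and a 2×2 space-to-depth ("pixel unshuffle") merge, which leaves 256 visual tokens per tile. The function names, the concatenation order and the simplified two-layer GELU projector without normalization are illustrative choices, not the official implementation.

```python
import math


def visual_tokens_per_tile(tile=448, patch=14, factor=2):
    """Tokens left per tile after merging each factor x factor block of ViT patches."""
    side = tile // patch  # 448 // 14 = 32 patches per side
    return (side // factor) ** 2


def pixel_unshuffle(grid, factor=2):
    """Merge each factor x factor block of patch features into one token by concatenation.

    grid is a list of rows, each row a list of feature vectors (lists of floats).
    The token count shrinks by factor**2 while the feature width grows by factor**2.
    """
    h, w = len(grid), len(grid[0])
    if h % factor or w % factor:
        raise ValueError("grid size must be divisible by factor")
    merged_rows = []
    for i in range(0, h, factor):
        row = []
        for j in range(0, w, factor):
            token = []
            for di in range(factor):
                for dj in range(factor):
                    token.extend(grid[i + di][j + dj])
            row.append(token)
        merged_rows.append(row)
    return merged_rows


def gelu(x):
    """Exact GELU activation using the Gaussian error function."""
    return 0.5 * x * (1.0 + math.erf(x / math.sqrt(2.0)))


def linear(x, weight, bias):
    """y = W x + b, with weight given as a list of output rows."""
    return [sum(w * v for w, v in zip(row, x)) + b for row, b in zip(weight, bias)]


def mlp_projector(token, w1, b1, w2, b2):
    """Simplified two-layer MLP (no normalization) mapping one visual token to the LLM width."""
    hidden = [gelu(v) for v in linear(token, w1, b1)]
    return linear(hidden, w2, b2)
```

With a 4×4 grid of one-dimensional features, `pixel_unshuffle` returns a 2×2 grid in which the top-left token concatenates patches (0,0), (0,1), (1,0) and (1,1).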

Provides a multi-stage training pipeline, consisting of MLP warm-up, incremental learning of the vision encoder and full-model instruction tuning, to optimize the model's multimodal capabilities.
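The stages differ mainly in which components are updated. The sketch below encodes one plausible freeze/unfreeze schedule, assuming the warm-up trains only the projector, incremental learning updates the vision encoder and projector while the LLM stays frozen, and instruction tuning updates every component. Stage and module names are hypothetical.

```python
from dataclasses import dataclass

MODULES = ("vision_encoder", "mlp_projector", "llm")


@dataclass(frozen=True)
class TrainingStage:
    name: str
    trainable: frozenset  # names of modules whose weights are updated in this stage


# Illustrative schedule for the three stages; module and stage names are hypothetical.
STAGES = (
    TrainingStage("mlp_warmup", frozenset({"mlp_projector"})),
    TrainingStage("vit_incremental_learning", frozenset({"vision_encoder", "mlp_projector"})),
    TrainingStage("full_instruction_tuning", frozenset(MODULES)),
)


def trainable_flags(stage):
    """Map every module name to True (update) or False (freeze) for the given stage."""
    return {name: name in stage.trainable for name in MODULES}


for stage in STAGES:
    print(stage.name, trainable_flags(stage))
```

In a PyTorch training loop, each flag would typically be applied by setting `requires_grad` on the parameters of the corresponding module.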

Introduces a progressive scaling strategy that efficiently aligns the vision encoder with large language models, reducing redundancy and improving training efficiency.

Random JPEG compression improves the model's robustness to noisy images, and loss reweighting balances the next-token prediction (NTP) loss across responses of different lengths.
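Loss reweighting changes how per-token NTP losses are averaged across responses. Plain token averaging lets long responses dominate the loss, while per-sample averaging gives each token of a short response outsized influence. The sketch below compares three weighting schemes and assumes a compromise weight of w = 1/sqrt(n) per token for a response of n tokens ("square averaging"). The function name and input format are hypothetical, and the JPEG augmentation is not shown.

```python
import math


def reweighted_ntp_loss(responses, mode="square"):
    """Weighted mean of per-token losses.

    responses: list of responses, each a list of per-token NTP losses.
    mode: "token"  -> every token weighs the same (long responses dominate)
          "sample" -> every response weighs the same (w = 1/n per token)
          "square" -> compromise, w = 1/sqrt(n) per token
    """
    weighted_sum = 0.0
    weight_total = 0.0
    for losses in responses:
        n = len(losses)
        if n == 0:
            continue  # empty responses contribute nothing
        if mode == "token":
            w = 1.0
        elif mode == "sample":
            w = 1.0 / n
        elif mode == "square":
            w = 1.0 / math.sqrt(n)
        else:
            raise ValueError(f"unknown mode: {mode}")
        weighted_sum += w * sum(losses)
        weight_total += w * n
    return weighted_sum / weight_total
```

For a one-token response with loss 1.0 and a four-token response with loss 0.0, token averaging yields 0.2, sample averaging 0.5 and square averaging about 0.33, which sits between the two extremes.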

An efficient data-filtering pipeline removes low-quality samples to ensure the quality of the training data.
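The filtering criteria are not spelled out here. As one illustration of a heuristic filter, the sketch below drops responses whose word n-grams repeat heavily, a common symptom of degenerate, looping text. The n-gram size, the threshold and the `{"response": ...}` sample format are arbitrary assumptions, not the pipeline used for InternVL 2.5.

```python
from collections import Counter


def repetition_ratio(text, n=3):
    """Fraction of word n-grams that occur more than once in the text."""
    words = text.split()
    if len(words) < n:
        return 0.0
    ngrams = [tuple(words[i:i + n]) for i in range(len(words) - n + 1)]
    counts = Counter(ngrams)
    repeated = sum(c for c in counts.values() if c > 1)
    return repeated / len(ngrams)


def filter_samples(samples, max_ratio=0.3, min_words=1):
    """Keep samples whose response is non-trivial and not dominated by repeated n-grams."""
    kept = []
    for sample in samples:
        response = sample["response"]
        if len(response.split()) < min_words:
            continue
        if repetition_ratio(response) <= max_ratio:
            kept.append(sample)
    return kept
```

Real pipelines usually combine several such signals, for example model-based quality scores and rule-based checks, rather than relying on one threshold.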

How to use:

1. Visit the Hugging Face website and search for the InternVL2_5-2B model.

2. Download the model, or use it directly on the platform, depending on your application scenario.

3. Prepare input data, including images and related text.

4. Use the model's API to submit the input data and obtain the model output.

5. Post-process the output as needed, for example by formatting generated text or parsing image recognition results.

6. Integrate model output into the final application or service.
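Steps 3 and 4 can be sketched in Python with Hugging Face `transformers`. This assumes the `OpenGVLab/InternVL2_5-2B` repository, which ships custom model code (hence `trust_remote_code=True`) exposing a `model.chat(...)` method, and assumes `pixel_values` has already been produced by the image-preprocessing helper published on the model card (not reproduced here). `build_question` and `describe` are hypothetical helper names; check the model card for the current API before relying on them.

```python
def build_question(prompt, num_images=1):
    """Prefix the prompt with <image> placeholders, following the model card's prompt convention."""
    if num_images < 0:
        raise ValueError("num_images must be non-negative")
    if num_images == 0:
        return prompt  # pure-text conversation
    if num_images == 1:
        return "<image>\n" + prompt
    # Several separate images are labeled Image-1, Image-2, ...
    prefix = "".join(f"Image-{i}: <image>\n" for i in range(1, num_images + 1))
    return prefix + prompt


def describe(pixel_values, prompt, model_id="OpenGVLab/InternVL2_5-2B", max_new_tokens=512):
    """Ask the model about a single preprocessed image and return the text reply."""
    # Heavy imports stay local so build_question works without torch/transformers installed.
    import torch
    from transformers import AutoModel, AutoTokenizer

    model = AutoModel.from_pretrained(
        model_id, torch_dtype=torch.bfloat16, trust_remote_code=True
    ).eval()
    tokenizer = AutoTokenizer.from_pretrained(model_id, trust_remote_code=True, use_fast=False)
    generation_config = dict(max_new_tokens=max_new_tokens, do_sample=False)
    # model.chat comes from the repository's remote code, not from transformers itself.
    response = model.chat(tokenizer, pixel_values.to(model.dtype), build_question(prompt), generation_config)
    return response.strip()
```

Keeping the `torch` and `transformers` imports inside `describe` means the prompt-building logic can be reused and tested on machines without a GPU stack; step 5 then operates on the plain string that `describe` returns.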